Two Unrecognized Hall Of Fame Shortstops

This article is dedicated to the memory of the late Clem Comly, who did more than anyone to put together the Retrosheet.org public database of baseball statistics that made this article and all Internet baseball encyclopedias possible.

The farewell tour of baseball's most admired player got me thinking over the past few months about how the Baseball Writers' Association of America (BBWAA) chooses which shortstops will be inducted into the Hall of Fame. (Players not voted in by the BBWAA get a second, much-delayed chance with the so-called Veterans Committee, which has often shown very poor judgment in selecting, or failing to select, players passed over by the BBWAA.)

The BBWAA has voted in just six post-integration players who either played most of their games or had their most valuable seasons at shortstop 1: Luis Aparicio, Ernie Banks, Barry Larkin, Cal Ripken, Jr., Ozzie Smith, and Robin Yount. So it seems there have been three different ways for a shortstop to get voted into the Hall of Fame by the BBWAA.

Reach a career batting milestone (3,000 hits or 500 pre-steroid-era home runs) that guarantees induction regardless of what position you played.

Amass more innings at short than any of your peers at short and own a reputation as the outstanding fielding shortstop of your time.

Retire with a career batting average near .300.

In the first category one finds Banks (512 home runs), Ripken (3,184 hits) and Yount (3,142 hits). In the second category one finds Aparicio (most defensive games at short upon retirement and 9 Gold Gloves) and Ozzie Smith (second-most defensive games at short upon retirement and 13 Gold Gloves). In the third category, one finds Larkin (.295 career batting average).

There are, though, shortstops who belong in the Hall of Fame—who were just as good as, or better than, some of these players—but are not in because they didn't satisfy any of these three rules of thumb relied upon by BBWAA voters. These shortstops perhaps didn't have exceptionally high batting averages, but were pretty good at drawing walks and/or had some power and/or stole bases with a high success rate; they didn't have the longevity to reach 3,000 hits, but in their prime were just as valuable to their teams as a Hall of Fame quality player should be; they maybe weren't quite as good in the field as Ozzie Smith, but were solid or pretty good or even better than that.

There are a few baseball encyclopedias/websites on the Internet that can improve our assessment of players who are pretty good at a lot of things rather than good at just one or two things. At least one of those websites also provides a simple way of comparing players with long careers with players who didn't play as long but who were better during their prime.

These websites measure the many elements of a baseball player's value (power hitting, getting on base, baserunning and fielding) and combine everything into one number. This is what wins above replacement, or WAR, is: an estimate of the wins created by a player relative to what a replacement-level player would have created. (A replacement-level player is usually defined as a player right on the line between making and not making a major league roster.)

One of the websites also rates overall careers using a simple formula proposed by Jay Jaffe: just take the average of a) a player's career WAR and b) the sum of his WAR for his seven best seasons (not necessarily consecutive). The resulting number is known as the Jaffe WAR Score system, or JAWS. Thus the best seven seasons of a player's career are treated as twice as important as his other ones, which even for most Hall of Famers are not impressive.
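As a rough illustration, the JAWS arithmetic described above can be sketched in a few lines of Python. The function name and the season WAR values are made up for the example; they are not taken from any website's published numbers.

```python
def jaws(season_war):
    """Average of (a) career WAR and (b) the sum of the seven best seasons.

    `season_war` is a list of single-season WAR values (hypothetical input).
    """
    career = sum(season_war)
    # The seven best seasons need not be consecutive, so sort first.
    peak7 = sum(sorted(season_war, reverse=True)[:7])
    return (career + peak7) / 2


# Made-up WAR values for an imaginary ten-season career.
example_career = [6.0, 5.0, 7.0, 2.0, 1.0, 4.0, 3.0, 5.0, 0.0, 2.0]
print(jaws(example_career))
```

Each of the seven best seasons appears in both terms of the average, which is why those seasons end up carrying twice the weight of the others.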

One limitation of WAR is that no version currently published on the internet is fully open-source—certain parts of WAR are black (or opaque) boxes 2. The other difficulty with WAR, probably somewhat related to the first, is that many BBWAA voters are still not that comfortable with it, as demonstrated by the fact that several players whom WAR shows to be overwhelmingly qualified for the Hall of Fame haven't made it in—and not just those players held back by issues related to the use of performance-enhancing drugs. The fact is, most voters still focus on traditional statistics like batting average, hits, home runs, runs batted in, Gold Glove awards, etc.

So instead of running through the various published WAR numbers, I'm going to begin our hunt for unrecognized shortstop Hall of Fame candidates using an open-source statistic that yields a number that should resonate with sportswriters and fans generally: Gross Production Average (GPA), invented by sportswriter Aaron Gleeman just over 10 years ago.

GPA expresses a player's overall rate of production as a hitter with a number that looks like the oldest and best-known baseball statistic of all: batting average, which unfortunately ignores walks and extra bases. GPA is simply a weighted combination of on-base percentage and slugging percentage: (.45 × OBP) + (.25 × SLG). 3 It has a virtually perfect correlation with another rate statistic derived from the exact relative average value of each batting event. 4
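For readers who want to check a GPA by hand, the formula above is a one-liner. This is only a sketch of the arithmetic; the on-base and slugging figures are invented for the example.

```python
def gpa(obp, slg):
    """Gross Production Average: (.45 x OBP) + (.25 x SLG)."""
    return 0.45 * obp + 0.25 * slg


# Hypothetical batter with a .360 on-base percentage and .440 slugging percentage.
example_gpa = gpa(0.360, 0.440)
print(f"{example_gpa:.3f}")
```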

A GPA of .260 is a little above average, just like batting average. Players with .220 GPAs usually don't keep their jobs, unless they are extraordinary defensively at a tough defensive position like shortstop. For example, Adam Everett, one of the greatest-fielding shortstops of all time, had a career GPA of .219. A GPA of .300 is just about as rare as a .300 batting average; a single-season GPA of .340 usually is close to league-leading, though with the recent collapse in offense, that has come down some. The highest career GPA among active players belongs to Albert Pujols (.328). Since Pujols' career batting average is slightly lower (.317), his GPA indicates that his ability to draw walks and/or hit for extra bases is proportionately even better than his ability to hit for a high average.

(League GPAs have varied a fair bit since Jackie Robinson's time, so I'll normalize all GPAs reported hereafter to the average GPA in the American League during the last 20 seasons: .258.)

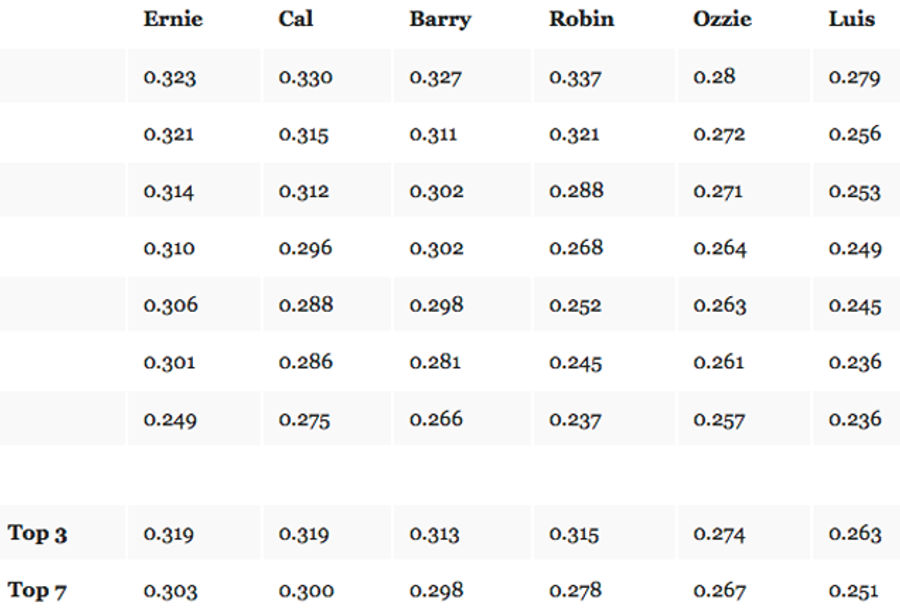

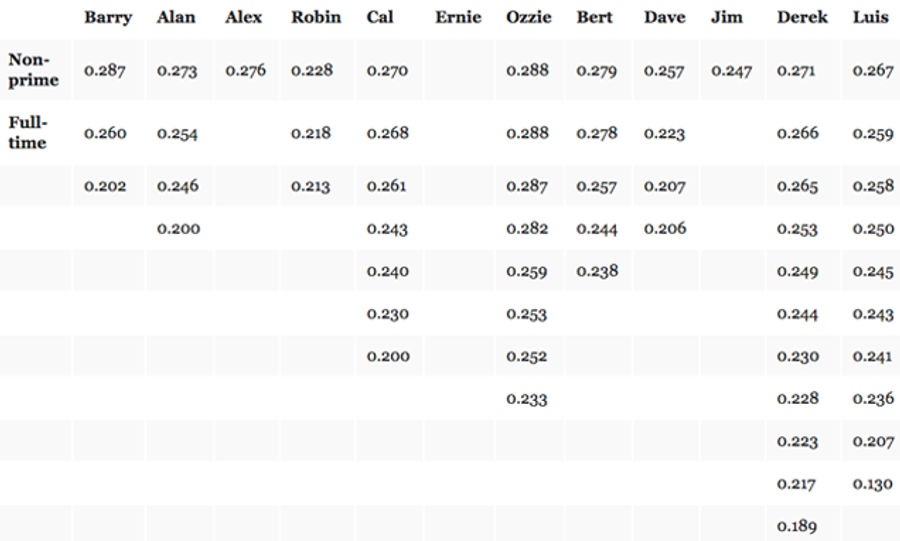

Let's compare our Hall of Fame shortstops using GPA. Consistent with the JAWS definition of a player's prime, we'll show the top seven (not necessarily consecutive) single-season GPAs solely for seasons in which each player was playing shortstop full-time and had enough plate appearances to qualify for the batting title. We'll also show the average of each player's top three seasons, representing his clearly established peak performance, and top seven GPAs, representing his average prime performance.
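The top-3 ("peak") and top-7 ("prime") averages are simple to compute once the qualifying seasons have been picked out. The sketch below assumes the list already contains only full-time shortstop seasons with enough plate appearances; the GPA values are invented.

```python
def top_n_average(season_gpas, n):
    """Average of the n best single-season GPAs (not necessarily consecutive)."""
    best = sorted(season_gpas, reverse=True)[:n]
    if not best:
        raise ValueError("need at least one qualifying season")
    return sum(best) / len(best)


# Made-up GPAs for nine qualifying seasons of an imaginary shortstop.
seasons = [0.310, 0.295, 0.280, 0.305, 0.270, 0.265, 0.290, 0.250, 0.240]
peak = top_n_average(seasons, 3)    # clearly established peak
prime = top_n_average(seasons, 7)   # average prime performance
print(f"peak {peak:.3f}, prime {prime:.3f}")
```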

Banks, Ripken, Larkin, and Yount had an average top-3 GPA of .317, which is coincidentally close to the average GPA of .318 for the seasons in which they finished in the top 5 in MVP voting. Since a league-leading GPA in most seasons is usually significantly higher than .318, MVP voters have been, quite correctly, giving shortstops extra credit for fielding such a tough position. Yount's top-7 GPA is a little low; he had most of his best hitting seasons playing center field and got into the Hall in large part because of those seasons.

So it seems that a shortstop with no-doubt, Hall of Fame-quality batting has about a .300 GPA during his prime. In the lesser seasons of his prime, his GPA is usually between .280 and .300, probably good enough to make him nearly the best-hitting shortstop in the game; at his peak, when he is a clear MVP candidate and among the handful of best players at any position, it is close to .320. If you are widely seen as the undisputed greatest fielding shortstop of your time, then you can get away with something close to an average top-7 GPA of .260 and a .270 peak GPA.

This raises a question. It's a truism that players who are great at one or two things tend to be more appreciated than equally good players who are good at everything. Might there be players who could fill that gap here, with long careers playing shortstop, okay-to-good fielding, and prime GPAs close to .280 and peak GPAs near .300?

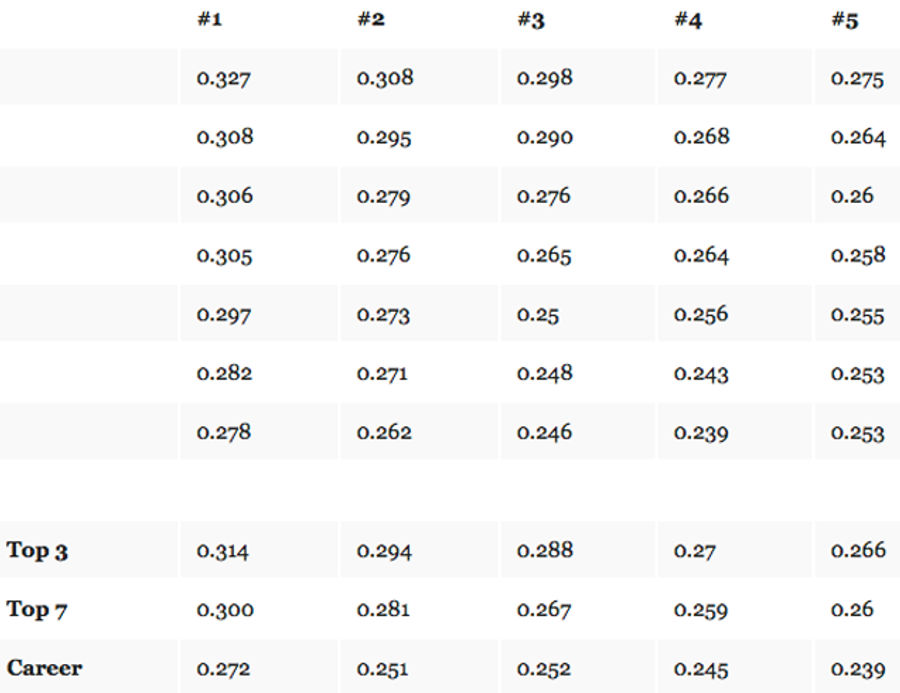

To identify unrecognized, potentially Hall-worthy shortstops using GPA, let's do the same GPA calculations we did above, but for all post-integration shortstops not yet in the Hall who 1) have not yet come up for a vote with the BBWAA, or who have and attracted at least enough votes to stay on the ballot for multiple years, and 2) played at least 2,000 defensive games at shortstop (and thus were presumably at least decent fielders). Each of the five players meeting these criteria also won more than one Gold Glove at short. Here we will show career GPAs, too, because all of these players played essentially their entire careers at short.

We have clearly found a serious candidate here: player #1. He is Alan Trammell.

Trammell had essentially the same top-3 and top-7 GPA as Banks, Ripken, Yount, and Larkin. Based on an open-source fielding evaluation system we'll be discussing shortly, he also saved more runs in the field than any of those three. Trammell was actually very close in overall value to Larkin.

Larkin is probably in the Hall because his lifetime batting average of .295 was close enough to .300 for him to be perceived as basically a .300 hitter, and perhaps also because he once hit over 30 home runs back when that was still considered miraculous for a shortstop.

Trammell is probably out (so far) because his .285 lifetime batting average, though very high for a career shortstop, wasn't close enough to .300 for him to be perceived as a so-called .300 hitter, and because he never hit 30 homers, though he did hit 28 once.

(If this seems reductive, take it up with the baseball writers.)

It's very important to keep in mind that the above GPAs are normalized to the level of offense in the American League during the past 20 years. Trammell's official statistics look more modest than Larkin's because all of his full-time seasons preceded the offensive boom that began in 1993, while most of Larkin's full-time seasons occurred after 1992.

Alan Trammell, 1989. Photo by Otto Greule Jr./Getty Sport

Trammell is still on the Hall ballot, though he has never attracted more than half of the 75% of votes needed for election and is now down to 21%. He has only two years of eligibility left: the BBWAA must vote him in next month or this time next year, or else he will have to wait many more years to be considered by the unreliable Veterans Committee.

It looks like we may have found a second unrecognized shortstop Hall of Fame candidate as well, and this one might be the guy who can fill the "gap" shown above between the shortstops who are Hall of Famers because of their hitting and those who are in because of their fielding reputations.

Player #2 has a peak GPA just under .295 and a prime GPA just over .280. Before revealing his identity, let's look at Numbers 5, 4 and 3.

Number 5 is Omar Vizquel. As a hitter, he has been similar to Luis Aparicio, but nowhere near as valuable a base-runner. Furthermore, as discussed at length in my recent book, Wizardry: Baseball's All-Time Greatest Fielders Revealed, Omar was not as good a fielder as Luis and not even close to Ozzie.

Wizardry introduced the first and still only open-source statistical model for estimating runs "saved" or "allowed" each season by fielders (and pitchers independent of fielders) throughout major league history: Defensive Regression Analysis, or DRA. When I say DRA is an open-source model, I mean that it is based solely on publicly-available statistics and fully-disclosed modeling of such statistics. Both the data and the math are openly available, so DRA can be verified or corrected by others, like any other scientific model.

For those of you with some familiarity with baseball statistical analysis, DRA is to defense what Pete Palmer's "Linear Weights" is to offense.5 Like Linear Weights, DRA estimates the impact of each fielder and pitcher using so-called "linear" equations, derived from standard tools of statistical inference.6

I don't want to get too deep into DRA here; I'm just saying that Vizquel avoided errors but didn't prevent that many more ground balls from going through the infield for hits than an average-fielding shortstop would have. This should not be too surprising, given that in his long career, in which he played 136 or more games at shortstop 16 times, he never led the league in shortstop assists. He was among the top 3 only once. Vizquel shouldn't even be close to consideration for the Hall, but I predict he will attract many votes and may stay on the ballot for quite some time, because he perfectly satisfies the second rule of thumb used up to now by the BBWAA for choosing shortstops for the Hall.

Number 4 is Dave Concepcion. He appeared on as many as 17% of BBWAA ballots. A journalist at The Economist magazine, Dan Rosenheck, has presented a great deal of evidence that the replacement level for shortstops in Concepcion's prime (the 1970s) was at an historic low. This would suggest that his value compared to replacement-level shortstops of his time was greater than shown on WAR websites. In addition, DRA (particularly the post-Wizardry version of DRA) indicates Concepcion's fielding is undervalued by the WAR websites, so an open-source version of WAR applying Rosenheck's analysis of replacement levels and DRA on defense could demonstrate that Concepcion was closer to an MVP-level player at his peak and an All-Star quality player during his non-peak prime.

Number 3 is Edgar Renteria. A 5-time All-Star, he once placed as high as 15th in an MVP vote, in 2003, when he had his career-high GPA of .299. Though he won a couple of Gold Gloves, he was actually a below-average shortstop as measured by DRA. I don't see a valid Hall of Fame case.

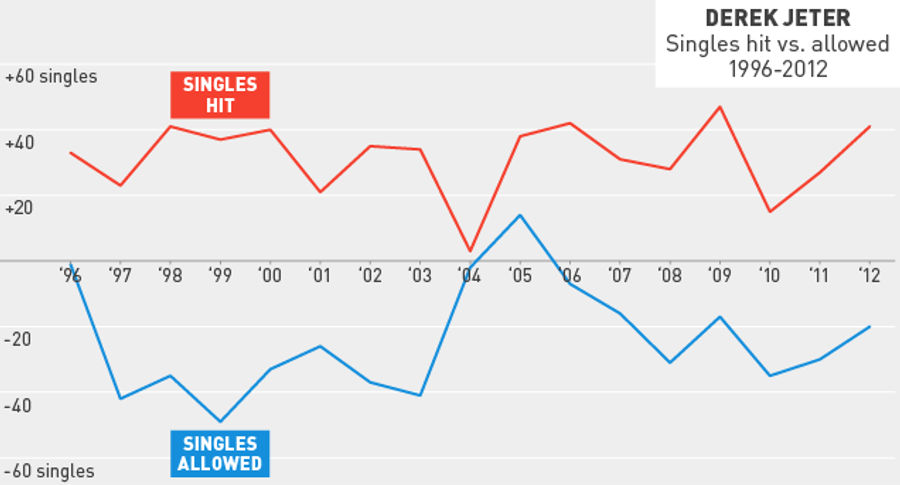

Number 2 is the most famous #2 in sports history. But #2 is not Derek Jeter as defined by the official, formally recognized statistics—Derek Jeter de jure—whose annual BA/OBP/SLG slash-lines would have yielded single-season GPAs right in line with our slugging shortstop Hall-of-Famers. That Derek Jeter was slightly below average at hitting doubles and home runs, about average at drawing walks, slightly above average at avoiding strikeouts ... and sensational at punching out singles to the right side of the outfield.

No, #2 as shown above is the unrecognized, the actual, real, effective Derek Jeter—Derek Jeter de facto—who was everything Derek Jeter de jure was, but also "sensational" at allowing singles to scoot through the left side of the infield.

Derek Jeter struggles on defense. Photo by Bob Levey/Getty Photos

Derek Jeter de facto is Derek Jeter de jure with the singles he allowed subtracted from the singles he hit.

In the next part of this article, we'll walk through the latest DRA estimate, showing Derek Jeter made approximately 450 fewer successful fielding plays than an average-fielding shortstop playing in his place would have fielded and converted into outs. Jeter played 2,674 defensive games at short. A player playing nearly every game per season, say, 150 games, plays 6 games a week, so Jeter played close to 450 "weeks" at short.

The easiest way, then, to put Derek Jeter's defense into perspective is to recognize that he allowed, on average, about an extra single a week throughout his career: something that nobody could ever notice and keep track of in their head simply by watching him play.
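The "one extra single a week" figure follows directly from the numbers quoted above, as this small check shows. Nothing here is new data: the games total and the roughly 450 plays are the figures from the text, and six games a week is the stated approximation.

```python
GAMES_AT_SHORT = 2674       # Jeter's defensive games at short, from the text
PLAYS_BELOW_AVERAGE = 450   # approximate DRA estimate quoted in the text
GAMES_PER_WEEK = 6          # a ~150-game season is about 6 games a week

weeks_at_short = GAMES_AT_SHORT / GAMES_PER_WEEK
extra_singles_per_week = PLAYS_BELOW_AVERAGE / weeks_at_short

print(f"{weeks_at_short:.0f} weeks, {extra_singles_per_week:.2f} extra singles per week")
```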

And, as we shall see, with the exception of his first full-time season and a highly intriguing interlude of three seasons at the beginning of the second half of his career, Jeter's rate of allowing more singles than an average shortstop would have each season was almost exactly the same and almost exactly as consistent year to year as his rate of hitting more singles than an average batter would each season.

Does our heretofore unrecognized Derek Jeter de facto belong in the Hall? If we subtract the approximately 450 singles he allowed from his official hit total, he had just over 3,000 hits. There is no precedent for the BBWAA not voting in a 3,000-hit player, regardless of position, and here we are considering a player who played the second-most defensive games ever at the most difficult fielding position, and who fielded that position perfectly adequately. Remember: Derek Jeter de facto is by definition a perfectly average-fielding shortstop because we've shifted all the singles he allowed over to his batting statistics.

I would contend, however, that BBWAA voters should not automatically vote for or against a Hall of Fame candidate simply because he reached or failed to reach a certain career hit total. It establishes bad incentives for players (two or three I can think of) to stretch out their careers when all they are doing is preventing younger and better players from getting a chance to play. And hit counts are a very imprecise measure of overall value. There are a few players in the Hall who had over 3,000 hits but did not have the impact in their peak or prime seasons of a great player because they didn't draw enough walks or hit for power or contribute enough on defense.

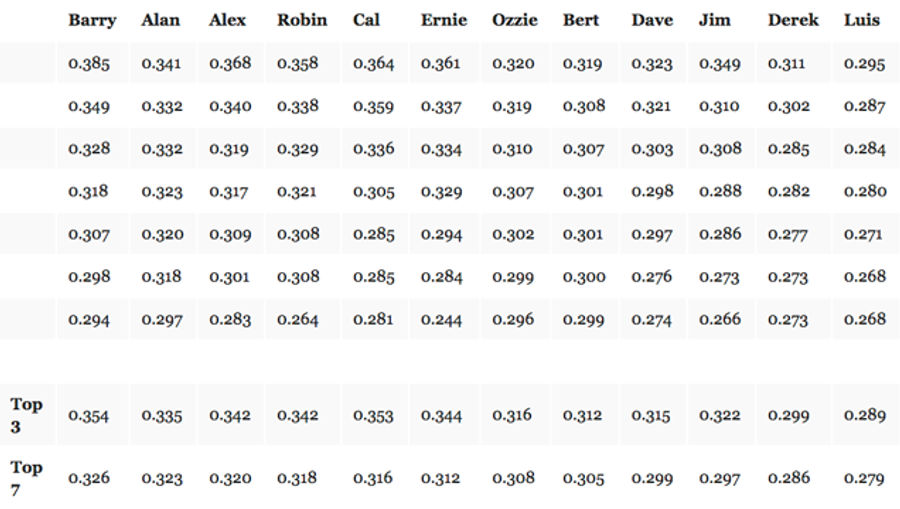

I think the best way to put the career of Derek Jeter into perspective is to do the same thing for several other post-integration shortstops that we did to discover Derek Jeter de facto. In other words, we'll add (or subtract) the latest DRA estimate of the singles those shortstops saved (or allowed) fielding shortstop each season in which they had enough plate appearances to qualify for the batting title and also played short full-time. We'll also give every player credit for his base running by converting the runs he "created" or "allowed" through his base-running, as reported by the WAR website Baseball-Reference.com, into additional (or fewer) singles when recalculating his GPA. And all GPAs will be normalized to the .258 level in the American League during Jeter's career.7

Looking at the numbers below, imagine what you would think of each shortstop if he had been Jeter's peer, completely average at fielding shortstop and at base-running, but had the following batting averages, with walks and extra-base-hitting performance proportional to such batting averages:

"Alex" is A-Rod. (Yes, Alan Trammell in his prime was basically as valuable as A-Rod, because Alan was a very good fielder, while A-Rod was erratic but basically below-average. Alan's career was over before performance-enhancing drugs were perfected.) "Bert" is Bert Campaneris. "Dave" is Dave Concepcion. "Jim" is Jim Fregosi. The latter three are no longer eligible for induction by the BBWAA.

There is a bit of "noise" in these estimates because the random season-to-season variability in singles saved or allowed by a full-time shortstop is somewhat (but surprisingly not much) greater than the random season-to-season variability in singles hit by the typical batter. So I would not put too much meaning into any single-season number above. But the top-3 averages, and especially the top-7 averages, give perhaps the best and most intuitive estimates of the value of the greatest shortstops since integration during the primes of their respective careers.

Looking just at the prime of his career, Derek Jeter de facto is not even one of the 10 best post-integration shortstops. Four post-integration shortstops not in the Hall of Fame were better in their primes than Jeter.

But what about non-prime seasons played at shortstop?

Before reading what I have to say about the non-prime seasons, I invite you to examine them closely and come to your own conclusions about how they should affect Hall of Fame votes. As we all know, A-Rod and Yount had some great non-shortstop seasons. Banks hung on for many years as a serviceable but unimpressive first baseman. Ripken had a few okay years at third, but actually never played at a true all-star-quality level after age 30. Jim Fregosi never played a true full season after age 30. What I would suggest is that Derek had more cumulative value as a full-time shortstop outside of his prime than any of the above players other than Ozzie Smith. However, Derek was really not significantly more valuable outside his prime than Luis Aparicio was during his.

So in choosing between our two unrecognized shortstop Hall of Fame candidates, Alan Trammell and Derek Jeter de facto, would you prefer trading Alan Trammell's top seven seasons for Derek Jeter de facto's top seven, with Luis Aparicio's non-prime value thrown in as compensation?

I would not take that offer. I would gladly trade Fregosi for Jeter, and probably Concepcion for Jeter. (Though remember that Dan Rosenheck showed that replacement level at short was lower in Concepcion's era, so I would be open to changing my mind.) I would not trade Campy for Jeter.

Dave Concepcion, 1981. Photo by Stephen Dunn/Getty Sports

Still, Jeter was a greater player than Hall of Famer Luis Aparicio, and close in value to Bert Campaneris, another player who I believe belongs in the Hall, so I can more than live with Jeter's induction. Moreover, I can think of at least 10 non-shortstops the BBWAA has voted in who were less deserving than Derek.

Of our two unrecognized shortstop Hall of Fame candidates, one is no-doubt-worthy-but-still-waiting, the other borderline-but-no-doubt-first-ballot. Though I hope you can see why Alan Trammell is the former, I can understand why you still can't believe Derek Jeter de facto is the latter. Nor should you, as I haven't presented any evidence yet of the existence of Derek Jeter de facto. It will take a fair bit of number crunching, so I will present it in such a way that you can quickly skim through it and then go back to scrutinize the parts that may still be hard to believe. We'll conclude by explaining

How the Yankees could have been so successful as a team—including on defense—with an historically poor fielder at shortstop and

Why Derek Jeter will continue to be revered by most fans as the greatest career shortstop since integration, but instead should be recognized by the BBWAA for what he really was—a good player who was fortunate to play shortstop as long as he did and for the best team of his time.

The Essential Problem and Basic Solution

Four hundred and fifty singles allowed is a lot. To justify this number requires an analysis more detailed than the average fan or even BBWAA voter is likely willing to wade through. (Of course, you are not an average fan, and I am trying to reach the best and most influential BBWAA writers.) To manage the level of detail, I'll relegate some of the analysis to a technical appendix. There is at least one thing I hope all readers will appreciate: I'm using public data (available at Retrosheet.org) and fully disclosed math, so nobody can dismiss the number out of hand.

Throughout major league history, when a ground ball has gone through the infield without going through someone's legs or being touched, or a ball hit in the air has landed in the outfield before being touched by a fielder, it has almost always been the case that the batter has been credited with a hit and the pitcher has been charged with allowing the hit.

The hit was simply a fact; whether some fielder nearby should have caught it and gotten the batter out was an imponderable hypothetical. And if a batted ball was successfully fielded, it was an out charged to the batter and credited to the pitcher. The out was simply a fact; whether the fielder demonstrated extraordinary skill in reaching and fielding the ball might be noticed but was never officially recorded as a hit prevented by that fielder.

Since there has never been an official event-by-event count of hits allowed or prevented by fielders, we have to use statistical techniques and probabilistic reasoning to infer that number over some reasonable time horizon, such as a full major league season.

To do this, we have to estimate the number of plays a fielder would have been expected to make if he were an average fielder at his position, in other words, his "expected plays." Then we simply subtract that estimate from the precise, counted number of successful plays the fielder actually made. Any net positive difference is hits saved; any net negative difference is hits allowed.

The way DRA estimates expected plays is to start with the initial assumption that fielders at each position face exactly the same number of fielding opportunities, given playing time, as all other fielders at that position. Then, based on large samples (20 years or more) of team seasonal data, DRA estimates, using simple arithmetic and the statistical technique known as "regression analysis," how much and how reliably various factors not under the control of the fielding position being evaluated tend to increase or decrease plays made by a team at that position over the course of a season. The factors considered depend upon what kinds of data are publicly available. Factors that can actually be counted for each fielder for all but three seasons since 1989 include

the relative number of balls hit into the field of play ("balls in play") (the more total balls in play, the more fielding opportunities, all else being equal, everywhere in the field),

whether they were hit on the ground or in the air (e.g., the more balls in play that are grounders, the more infielder opportunities),

whether they were hit by left- or right-handed batters (ground outs tend to be pulled and outs on balls hit in the air tend to be hit to the opposite field),

whether they were allowed by left- or right-handed pitchers,

the placement of base-runners and the number of outs (which impacts fielder positioning) when each ball in play was hit, and

the identity of the hitter and that hitter's lifetime distribution of outs on balls in play by fielding position (to take into account extreme shifts for individual batters).

An article I wrote for The Hardball Times Baseball Annual 2012 concluded that since 1989, only the first three factors had both a statistically and practically significant impact on net plays made by teams at any position over the course of a season. Part A of the technical appendix goes through the calculations for shortstop, as well as why errors are ignored and why hit prevention by infielders should be measured only for ground balls, not balls hit in the air.

Yes, there are very occasionally outstanding fielding plays by infielders on line drives and Texas Leaguers, but there is no evidence from public data (or even published results from private batted ball location data) supporting the notion that any shortstop has, year-by-year, consistently prevented a significant number of hits that way. Something like 95% of all balls hit in the air that are caught by infielders are high fly balls that could be caught by any major league player fielding the position and usually one or two other fielding positions as well. In fact, DRA credits those outs to pitchers, because in such cases the pitcher has generated an essentially automatic out.

Yes, errors should count (they already are counted in DRA as plays not made), but they should not be counted a second time as some sort of penalty, as they effectively are in some WAR calculations. Errors in fielding batted balls at short (and basically everywhere) are on average no more damaging to a defense than a clean hit allowed.

Yes, it is absolutely the case that when there is a runner at first, the middle infielders and first baseman have to hug second and first base, respectively, which reduces the likelihood of fielding a ground ball. And when there is a runner at third with less than two outs, infielders have to play in. But the effect in these cases is modest, and the variation across teams in the number of these situations over the course of a season is modest, so it just isn't material in estimating the number of batted ball fielding chances for an infielder (still less an outfielder) per season.

Yes, certain opponent batters are extreme pull hitters on ground balls, which recently led teams to expanded use of extreme shifts similar to the Ted Williams shift. Though it is clear that extreme shifts have affected fielding opportunities over the last few seasons, they did not have a statistically and practically significant impact over the 20 years ending in 2011, except arguably at third base. Even now with odd shifts, I don't believe the number of plays by shortstops is impacted that much. And in any event, Jeter played almost his entire career before the recent and growing trend toward extreme shifts.

Given all the above considerations, in general, the best objective, unbiased estimate based on public data of singles "prevented" or "allowed" by a shortstop over the course of a season is simply his net ground out plays against left- and right-handed opponent batters, given the relative number (relative to the league that year) of ground balls hit by opponent left- and right-handed batters while that shortstop was playing. For purposes of the chart below, let's call this the "Basic" estimate. This is essentially the estimate used above for evaluating all of the shortstops shown above other than Jeter. 8
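To make the "Basic" estimate concrete, here is a deliberately simplified sketch. It assumes expected plays can be approximated as a league-wide rate of shortstop ground outs per ground ball, taken separately for left- and right-handed batters; the actual DRA coefficients come from the regressions in the technical appendix, and every name and number below is hypothetical.

```python
HANDS = ("L", "R")  # opponent batter handedness


def expected_plays(gb_by_hand, league_rate_by_hand):
    """Plays an average shortstop would make, given the ground balls
    hit by lefties and righties while this shortstop was on the field."""
    return sum(gb_by_hand[h] * league_rate_by_hand[h] for h in HANDS)


def net_plays(actual_by_hand, gb_by_hand, league_rate_by_hand):
    """Positive = singles saved; negative = singles allowed."""
    actual = sum(actual_by_hand[h] for h in HANDS)
    return actual - expected_plays(gb_by_hand, league_rate_by_hand)


# Made-up season: ground balls faced, league conversion rates, plays made.
ground_balls = {"L": 600, "R": 1000}
league_rate = {"L": 0.10, "R": 0.30}   # assumed SS ground outs per ground ball
plays_made = {"L": 55, "R": 280}

season_net = net_plays(plays_made, ground_balls, league_rate)
print(round(season_net))
```

In this invented season the shortstop made 335 plays where an average one would have been expected to make about 360, so he would be charged with roughly 25 singles allowed.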

If you do that arithmetic, over the course of his career, Jeter made approximately 730 fewer plays than expected. Given the relative number of opponent left- and right-handed batters, he was roughly equally bad against both. Seven hundred and thirty.

Special Considerations Unique to Jeter

To be fair to Jeter, he has been subject to two highly unusual contextual factors.

First, and this is something I've been mulling over since Wizardry came out, Jeter's pitchers were phenomenal fielders who may have fielded batted balls that might otherwise have reached Jeter. Yankee pitchers were so unusually outstanding that even though the effect is usually too minor to worry about, I estimated using DRA that for each 10 extra ground out fielding plays made by pitchers against left-handed batters, ground out fielding plays at shortstop against left-handed batters go down by about 1. For each 10 extra ground out fielding plays by pitchers against right-handed batters, shortstop ground outs against right-handed batters go down by about 3. I also checked if third basemen have a statistically noticeable impact on shortstop fielding per season; the answer is no, once you take into account pitcher fielding.
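The pitcher-fielding adjustment amounts to two ratios: about 1 shortstop play lost per 10 extra pitcher plays against lefties, and about 3 per 10 against righties. A minimal sketch of how that credit might be applied follows; the function name and the sample inputs are assumptions for illustration, and only the two ratios come from the text.

```python
# Shortstop ground outs lost per extra pitcher ground out play, by batter hand.
SS_PLAYS_LOST_PER_PITCHER_PLAY = {"L": 0.1, "R": 0.3}


def plays_absorbed_by_pitchers(extra_pitcher_plays_by_hand):
    """Estimated shortstop plays taken away by above-average pitcher fielding.

    The result is credited back to the shortstop, i.e. it is subtracted
    from his expected plays before comparing with the plays he made.
    """
    return sum(
        SS_PLAYS_LOST_PER_PITCHER_PLAY[hand] * extra
        for hand, extra in extra_pitcher_plays_by_hand.items()
    )


# Made-up season: pitchers made 10 extra plays vs. lefties, 20 vs. righties.
credit = plays_absorbed_by_pitchers({"L": 10, "R": 20})
print(round(credit, 1))
```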

Second (and I really have to thank Sean Smith, the creator of the first and still most commonly cited version of WAR, for alerting me to this issue), for some reason there has been a dramatic park effect suppressing shortstop plays at Yankee Stadium (including the new one). This is extremely unusual. Sean has previously gone on record to say that parks affect fielding to a significant degree only in left field at Fenway and at all outfield positions in Coors, and that was also my conclusion for recent seasons after writing Wizardry.9

To measure the park effect in Yankee Stadium at shortstop, I compared

the rate of ground outs at short against left- and right-handed batters

after taking into account pitcher fielding

for all shortstops other than Jeter each season from 1996 through 1999 and 2003 through 2012 (Jeter's full-time seasons before this most recently concluded season,10 excluding 2000-02, when we don't have exact counts of ground balls by batter hand),

when the non-Derek Jeters were playing at Yankee Stadium and when they were not.

Part B of the technical appendix shows all the calculations. From 2003 onward, the effect cut shortstop plays for the non-Derek Jeters by 2% of total ground balls hit by lefties and 1% of those hit by righties. It did not take hold before 2000 and could not be calculated the same way for 2000-02; to be as generous as possible, I assumed the post-2003 park factor for 2000-02 as well.
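The park factor can be sketched the same way. The 2% and 1% figures and the 2000 starting point come from the paragraph above; applying them only to ground balls hit in home games is an assumption of this sketch (Part B of the appendix has the actual calculation), and the ground ball counts are invented.

```python
# Share of ground balls, by batter hand, that the park turns from
# shortstop plays into hits (figures quoted in the text).
PARK_CUT = {"L": 0.02, "R": 0.01}
FIRST_ADJUSTED_SEASON = 2000  # no park effect was found before 2000


def park_credit(season, home_gb_by_hand):
    """Shortstop plays credited back for the Yankee Stadium effect.

    Assumes the adjustment applies to ground balls hit in home games only.
    """
    if season < FIRST_ADJUSTED_SEASON:
        return 0.0
    return sum(PARK_CUT[hand] * gb for hand, gb in home_gb_by_hand.items())


# Made-up home ground ball counts for two seasons.
print(park_credit(1998, {"L": 300, "R": 500}))
print(round(park_credit(2005, {"L": 300, "R": 500}), 1))
```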

Jeter's Net Singles Allowed

After developing a general model that considered the potential impact of the number of ground balls by batter hand, and by pitcher hand after batter hand, and batter career batted ball distributions, and the presence of base-runners; and after developing just for Derek Jeter further adjustments for pitcher fielding and a park factor (which very few analysts have considered for shortstop defense), we arrive at the following break-down of Derek Jeter's net singles "allowed" compared to an average-fielding shortstop. Also shown is the number of singles he hit above or below the league-average rate, given the total number of balls he hit into the field of play (excluding home runs).11

There are three interesting patterns in the numbers above. To be clear, I did not go looking for these patterns or expect to find any patterns at all when I was doing the number crunching. Whatever negative inferences might be drawn from these results were not even imagined when I started this project. Furthermore, I can't completely rule out the possibility that the trends could be due to sheer randomness.

First, Jeter was basically an average-quality fielder in 1996 and 2004-06.

Why might Jeter have been better in the field in 1996 and then again 2004-2006? I can only speculate, but could it have been that Jeter had to establish himself as the Yankee shortstop in 1996 and had to re-establish himself as the shortstop when A-Rod arrived in 2004? I will not say that he consciously decided he could take it easy after he won the job in 1996 and after a perceived competitive threat receded by, say, 2006. But playing shortstop is incredibly physically demanding. A player with exceptional offensive ability might, perhaps unconsciously, avoid making more physically risky fielding plays to stay healthy and in the lineup, and in some cases be more than justified.12 Derek certainly succeeded in staying in the lineup.

We all know of the one case where Jeter did take a fielding dive and got hurt—not to prevent a ground ball hit, but to catch a foul pop fly in the stands. That play occurred in ... 2004. That was also the year of Jeter's first Gold Glove. In fact, the first three Gold Gloves given to Derek were given in his three-year window of solid fielding performance. Is it possible that the coaches and managers who do the voting had some inside information that Derek was stepping up his game?

Second, in all his full-time seasons other than 1996 and 2004-06, Jeter's singles-allowing was almost exactly as consequential and consistent as his singles-hitting.

If you omit Jeter's short seasons and the four seasons in which he was more or less average for reasons that in retrospect seem plausible, the average number of singles allowed each season by Jeter was −32 and the standard deviation in singles allowed was 10. If you look at the same seasons, the average number of singles Jeter hit above the average rate, given the total number of balls he hit into the field was +31, and the standard deviation was ... 10. ("Standard deviation" is a measure of the "spread" in values, and indicates in this case that Jeter's singles allowed from 1997-2003, 2007-12 and 2014 didn't vary any more year-to-year than his singles hit.) The chart below shows how remarkably consistent the pattern was going into his lost year of 2013.

Chart by Reuben Fischer-Baum

Third, Jeter didn't get any help from Yankee pitcher fielding or the mysterious Yankee Stadium park effect until at least three seasons after he consistently demonstrated extremely poor fielding.

During Jeter's first three bad-fielding seasons, Yankee pitcher fielders took away, on average, just one play from Jeter. During the next seven seasons, ending with Derek's last decent fielding season in 2006, Yankee pitchers took away an extra 10 plays per season—a phenomenal total for a seven-year period.

The park effect numbers shown above are actually smoothed out from the raw numbers that appear in the technical appendix, because all analysts smooth out park effects over multi-season periods. The public data doesn't exist to do the park effect analysis for 2000-02, but to be nice to Derek I assume the trend that became apparent in 2003 began in 2000.

The delayed but then sharp and sustained Yankee Stadium park effect and improvement in Yankee pitcher fielding suggest to me Yankee management did begin to realize they had a problem and tried to do something about it.13 Perhaps they signed pitchers who were good fielders or simply urged them to do their best to cover for Derek on ground balls up the middle. Perhaps they tried to manipulate the infield surface with the intention of helping Jeter, but with the effect of reducing plays for all shortstops, including Jeter. Perhaps the infield grass on the shortstop side (or in the gap between third and short and up the middle) was allowed to grow longer, to slow down grounders hit to short. A recent article suggested that Jeter in the first part of his career played relatively shallow, presumably to compensate for a weak arm.

I really can't say what it was at Yankee Stadium that suppressed ground ball plays at short, but it seems to have been a substantial factor and one that did not take effect until at least three years after Jeter took over at short, continued at the new Yankee Stadium, and has remained in effect since then.14 It will be interesting to see if the park effect persists after The Captain is gone.

"Noise"

Yes, there is noise in these estimates of singles allowed. And there is a simple way of thinking about it and estimating it. And when you do, you conclude that the fairest conservative estimate of Jeter's singles-allowed is still close to 420. Furthermore, if you take into account some additional, relatively minor, missing factors, one reaches a conservative conclusion that Jeter's impact on Yankee defense was at least the equivalent of allowing about 460 hits.

We all know that a fair die lands on 6 one-sixth of the time. But the many factors influencing whether the die lands on 6 cause random variation in the number of times 6 comes up. A physicist could probably list half a dozen factors almost without thinking. One intuitive way of thinking about randomness is that it is the effect of a high, sometimes extremely high, number of independent causal factors, each of which has a tiny effect. Therefore, rather than try to track or measure these practically innumerable but negligible factors, you learn to work with the aggregate "random" effect, to live with it.

There is a simple formula for estimating the variance in the number of times 6 is rolled, given a particular number of throws. That formula is the probability of a "success" (rolling a 6), or one over six, multiplied by one minus that probability, multiplied by the number of throws. So let's say you throw the die 2,000 times. The variance is (1/6) × (5/6) × 2,000, or 278. If you take the square root of that number, you get the standard deviation, which is approximately 17.

In plain English, that means if you rolled a fair die 2,000 times, you should expect to roll a 6 333 times, but about one-sixth of the time you will roll fewer than 316 (333 minus 17) and one-sixth of the time you'll roll more than 350 (333 plus 17). About 2% of the time you'll roll fewer than 299 (333 minus 17 minus another 17) and about 2% of the time you'll roll more than 367 (333 plus 17 plus 17). Just from dumb "luck" (actually, the myriad factors that "randomly" impact each particular outcome).
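For readers who want to check the die arithmetic, here is a minimal Python sketch of the variance formula just described. The function name is an illustrative choice, not something from the article.

```python
import math


def binomial_mean_sd(p, n):
    """Return (expected successes, standard deviation) for n independent trials."""
    mean = p * n
    variance = p * (1 - p) * n  # probability x (1 - probability) x throws
    return mean, math.sqrt(variance)


mean, sd = binomial_mean_sd(1 / 6, 2000)
print(round(mean), round(sd))  # 333 17
```

The thresholds in the text (316, 350, 299, 367) are simply the mean plus or minus one or two of these standard deviations.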

From 1996 through 2012, the total number of non-sacrifice-bunt ground balls hit per season by an average American League team's opponents, as recorded by Retrosheet.org, was roughly 2,000 (2,026). And about one-fifth of those ground balls, or about 400 (412), were, on average, caught by the shortstop and converted into an out. Twenty percent times 80% times 2,000 is 320, the square root of which is about 18.

So assuming a perfectly average shortstop playing nearly every game faced a perfectly "fair" but random distribution of ground balls, and all we knew was the total number of such ground balls (say 2,000) and the average success rate, we would expect the number of his successful plays to miss 400 by more than 18, in one direction or the other, about one-third of the time, based purely upon the random difficulty of the ground balls hit while he was on the field. Stated differently, the random noise would be less than plus or minus 18 plays only about 67% of the time, and less than plus or minus 36 plays about 95% of the time.
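The same formula applied to shortstop ground balls, with the 67% and 95% figures checked against the normal approximation, can be sketched as follows. The helper names are hypothetical. Using the normal curve is a stated assumption; it is reasonable here because 2,000 chances at a 20% success rate is a large sample.

```python
import math


def play_noise_sd(success_rate, ground_balls):
    """Standard deviation of successful plays from chance alone."""
    return math.sqrt(success_rate * (1 - success_rate) * ground_balls)


def share_within(k):
    """Share of outcomes within k standard deviations (normal approximation)."""
    return math.erf(k / math.sqrt(2))


sd = play_noise_sd(0.20, 2000)
print(round(sd, 1))  # 17.9
print(round(share_within(1), 2), round(share_within(2), 2))  # 0.68 0.95
```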

That's assuming each shortstop plays with a fair "die" that is tossed the same number of times. But the total number of ground ball "tosses of the die" varies per season by the tendency of pitchers to allow ground balls, and the "die" of ground ball chances at shortstop over the course of a season is "loaded" by the kinds of factors we discussed above: relative number of ground balls hit by left- and right-handed batters, extremely good pitcher fielding, etc.

In point of fact, Jeter has faced, at least in some seasons, systematically "unfair" levels of 1) ground ball pitching, 2) opponent left- and right-handed batting, 3) pitcher fielding and 4) a mysterious park effect of Yankee Stadium.

DRA adjusts the raw numbers for a fielder so that, given his playing time and all public and objective data, he is treated as facing 1) the same number of fielding opportunity "tosses of the die" and 2) a die that is "unloaded" so it is "fair."

That of course still leaves random variation in the "fairness" of fielding opportunities, just as there is when you toss a fair die.15

Above, I noted that if you excluded Jeter's first full-time season and the three seasons after A-Rod joined the team, the standard deviation in his singles allowed compared to an average fielder each full-time season was 10, which was as low as the standard deviation in the singles he hit above average, given his total balls hit into play, which was also 10.16 If you thought I was being a bit too cute picking and choosing seasons, then simply assume Jeter was exactly the same quality fielder throughout his career, or about –25 singles allowed for each of his 18 full-time seasons (1996 through 2012, and 2014). The standard deviation in the singles he actually allowed, considering all of his full-time seasons, is ... 17. Almost precisely what you would expect if we had created a "fair" die for him to estimate the number of ground balls he "should" have caught each of those seasons.

Note that the "noise" in his career estimate of 460 singles allowed is not 17 multiplied by his 18 full-time seasons, which would create a "standard error" bound of +/− 306 career plays. No, randomness does not grow linearly, but with the square root of chances. Jeter faced approximately 27,000 ground balls in his career. Assuming we've done a fairly good job of making the "die" fair, season by season, the standard deviation in his expected ground out plays over the course of his career would be approximately the square root of 20% times 80% times 27,000, or about +/− 66 plays.
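The square-root growth of noise can be verified in a few lines. This sketch compares the naive linear bound with the career figure, then shows that adding up season-by-season variances gives the same answer. Splitting the 27,000 ground balls into 18 equal seasons is an assumption made only for illustration.

```python
import math


def binomial_sd(p, n):
    """Standard deviation of successes in n independent chances."""
    return math.sqrt(p * (1 - p) * n)


naive_bound = 17 * 18                  # wrong: treats noise as growing linearly
career_sd = binomial_sd(0.20, 27000)   # right: grows with the square root

# Assumption for illustration: 18 equal seasons of 1,500 ground balls each.
per_season_sd = binomial_sd(0.20, 27000 / 18)
combined_sd = math.sqrt(18 * per_season_sd ** 2)  # variances add, not SDs

print(naive_bound, round(career_sd), round(combined_sd))  # 306 66 66
```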

The argument could be made that poor Derek just might have been in the 98th percentile of sheer bad luck in terms of where all those ground balls were hit, so we should subtract 132 from 460 to get "only" 328 hits allowed. But there is little reason to think Derek couldn't have been in the 98th percentile of good luck, in which case he'd have 592 hits allowed.

Most importantly, we must bring to mind one huge factor almost no one ever thinks about. Offensive numbers are also subject to random variation.

For example, Derek Jeter de jure hit about 9,400 balls into the field of play (at-bats minus strikeouts minus home runs plus sacrifice flies) and was credited with close to 2,600 singles scooting through or dropping in. So his rate of singles per ball in play was about 28%. Using our old formula we get a standard error of +/− 44 singles hit, two-thirds of the standard error of +/− 66 singles allowed.

So in the interest of "fairness," since nobody does any discounting for the randomness in Derek Jeter de jure's singles hit, I would think that we as baseball fans should be discounting only the amount of randomness in Derek Jeter de facto's career singles allowed (approximately +/−66) that exceeds the amount of randomness in Derek Jeter de jure's career singles hit (approximately +/−44). This is just +/−22 hits per standard deviation.17 If you subtract these two standard deviations (44 hits) from 460, you get 416. But that is the lowest reasonable limit given the objective, publicly-available data we have and how we treat random variation in offensive categories.
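The discounting argument above reduces to a short calculation. The sketch below follows the article's own arithmetic, which subtracts the two standard errors directly. The variable names are illustrative.

```python
import math


def binomial_sd(p, n):
    """Standard deviation of successes in n independent chances."""
    return math.sqrt(p * (1 - p) * n)


hitting_sd = round(binomial_sd(0.28, 9400))    # singles hit: about 44
fielding_sd = round(binomial_sd(0.20, 27000))  # singles allowed: about 66

excess_sd = fielding_sd - hitting_sd           # noise not also ignored on offense
conservative_estimate = 460 - 2 * excess_sd    # two standard deviations

print(hitting_sd, fielding_sd, excess_sd, conservative_estimate)  # 44 66 22 416
```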

Finally, I have left certain things out that would push that estimate of about 420 hits allowed effectively back over 460, in fact closer to 500.

We have not counted Jeter's below-average performance in completing double plays by making the pivot assist, worth about another −15 hits allowed over his career. (I'll spare you the calculation of that.)

We've included Jeter in the calculation of league-average performance. Everybody does this because it's tedious to eliminate each player's data from the league data when trying to measure him relative to his league. But in a real sense, particularly with someone as bad as Jeter, his own numbers pull down the league total by, say, −25 hits allowed per season. With 14 American League teams per season, that's −25/14 hits per season, which multiplied by 18 full-time seasons played by Derek, is another −32 hits allowed.

And we also gave Derek two breaks with the Yankee Stadium park factor. It was actually positive +27 hits over his first four seasons (1996-99), but erratic enough that I zeroed it out. And we gave him a boost in 2000-02, when the calculations could not be made because of the lack of exact counts of ground balls by batter-hand, of +39 hits, based on what happened in 2003 onward. 1999 was extremely positive and 2003 clearly negative, and we have no idea whether the change occurred in 2000 or 2001 or 2002, so there is no clear evidence we should be giving Derek back +39 hits in 2000-02.

So if you start with the conservative estimate of 416 singles allowed, and charge back another 15 for double plays, another 32 for being compared partly against himself, another 27 for a possibly favorable Yankee Stadium park factor in 1996-99, and another 39 because I don't have the evidence for a park factor for 2000-02 but just wanted to be nice, we arrive at 529 hits allowed. Even if we leave the park factors alone, adjusting for double plays and for being compared against himself makes up for the randomness discount, and basically gets you right back to 460.
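The charge-backs are easy to tally. This sketch only reproduces the figures given in the preceding paragraphs; the dictionary labels are shorthand, not terms from the article.

```python
conservative_estimate = 416

# Hits to charge back, as listed in the text.
adjustments = {
    "double-play pivots": 15,
    "compared partly against himself": 32,
    "park factor 1996-99": 27,
    "park factor 2000-02": 39,
}

# The self-comparison figure: 25 hits per season spread over 14 teams, 18 seasons.
self_comparison = 25 / 14 * 18

total = conservative_estimate + sum(adjustments.values())
without_park = (conservative_estimate
                + adjustments["double-play pivots"]
                + adjustments["compared partly against himself"])

print(round(self_comparison), total, without_park)  # 32 529 463
```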

If that number still seems inconceivably large, remember it is the equivalent of just one single allowed per week by the shortstop with the second most games played at short in history.

Conflicting Estimates of the Impact of Jeter's Fielding

Four hundred sixty singles allowed is the equivalent of something like 340 defensive runs allowed.18 Baseball-Reference.com shows only 246 defensive runs allowed. I'm not sure exactly what the estimate is at Fangraphs.com, but it is probably similar.19 That gap of roughly a hundred runs makes the difference between Jeter being a clear (though not first-ballot) Hall of Fame quality player and being a borderline-quality Hall of Famer. The technical appendix provides the unavoidably intricate explanation as to why the estimates of Jeter's singles allowed shown on leading websites, which come out of black-box or opaque-box systems, yield the smaller number and why the DRA estimate, particularly on a career basis, is likely significantly more accurate.

The short answer is that some of the estimates use clearly biased math, and public evidence has been presented that, at least for most of Jeter's career, batted ball location data (proprietary for all but three of Derek's seasons) was probably biased by the coders to compress all fielder ratings, thus causing poor fielders like Derek to be overrated and excellent fielders to be underrated.

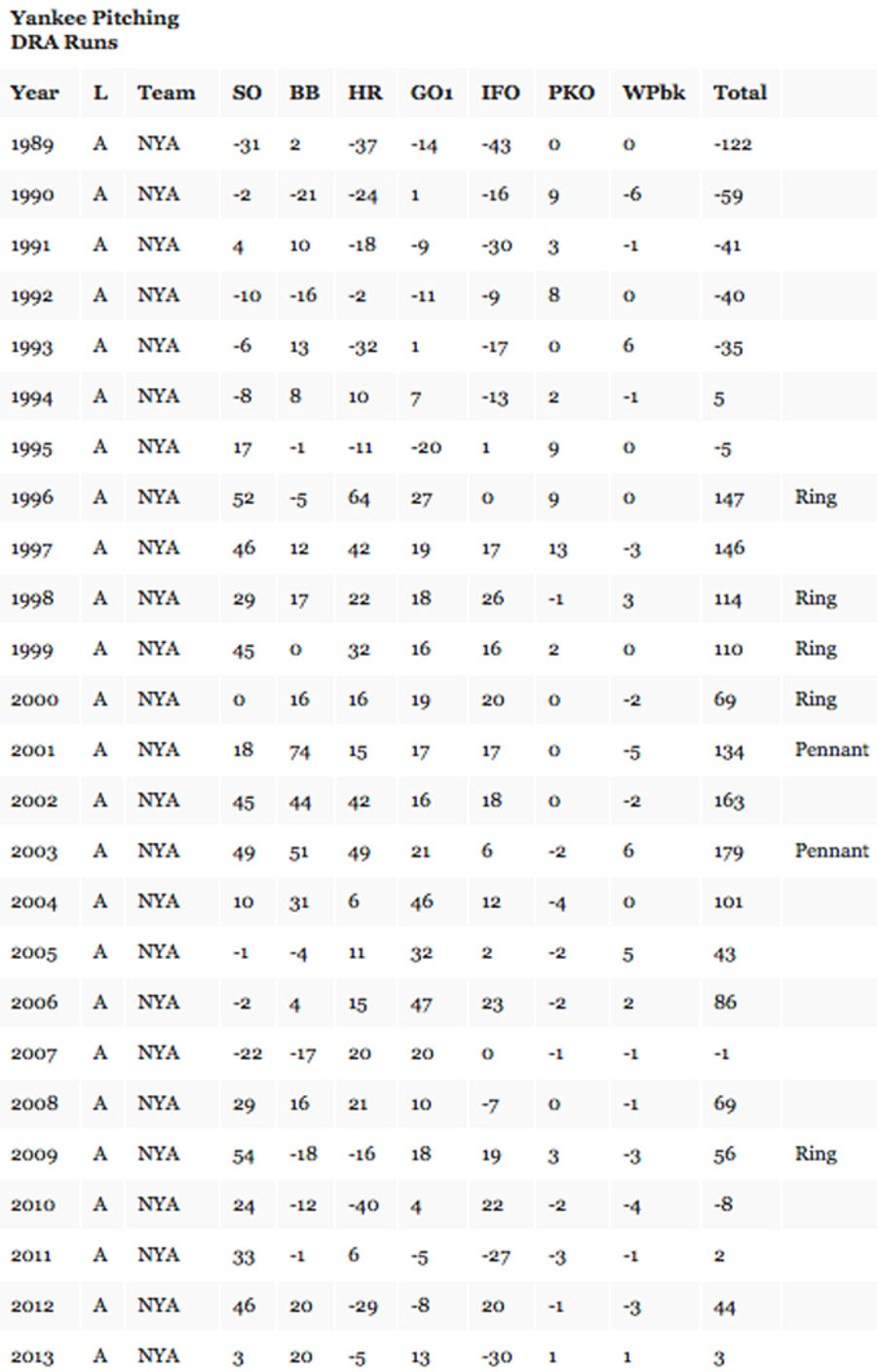

Because Rings!

Jeter has always been a "winner." Every one of the 19 teams for which he was the actual or expected starting shortstop on Opening Day had a winning record. Sixteen of those teams made the playoffs. Seven won pennants. And five won the World Series.

This is very simple to explain using DRA. Recall that DRA also evaluates the runs saved and allowed by pitchers. DRA evaluates pitchers, both individually and as a team, based on their relative number of strikeouts, non-intentional walks and batters hit by pitch, home runs allowed, ground balls fielded themselves, infielder fly outs (because they are nearly always weakly hit balls that are automatic outs not creditable to fielders), wild pitches and balks, and base-runners picked off. Each event has a run-weight derived from DRA (and quite similar to the Linear Weights counterparts on offense) so that we can break out the runs saved and allowed by pitchers for each of these events separately and then add them all up to find overall pitching value.

Two pitching staffs over the past quarter century have stood head and shoulders above all others over extended periods of time. The first one everyone knows about: the Maddux-Smoltz-Glavine Braves. The second? The New York Yankees ... beginning precisely in Derek's first full-time, Rookie of the Year season.

Yankee pitching had been terrible in 1989, below average in 1990-93, about average in 1994-95, and then suddenly and utterly outstanding in Derek's first full-time season, and in the next eight thereafter, with only a minor blip in 2000.

Baseball analysts have known for at least 30 years that every 10 runs a team creates on offense or saves on defense over the course of a season is associated with approximately 1 extra win. Except for 2000, Yankee pitchers were saving between 101 and 179 runs per season from 1996 through 2004, good for approximately 10 to 18 wins per season.

Assuming the 1996-2004 Yankee non-pitchers were all completely average in overall value on offense and defense, Yankee pitchers would have gotten the team to 91 to 99 wins every year except 2000. Carrying one player who was above average in overall value, even if not a consistent All-Star, would not hold back such a juggernaut. And the rest of the team could hit a little, too. (Note that I'm awaiting the release of 2014 open-source Retrosheet data to calculate all of the elements of Yankee pitching DRA runs, and a slightly different DRA model is used for 2000-02 because of the reduced amount of data available from retrosheet.org, and that model did not include runs for runners picked off by pitchers, which is trivial in the overall scheme.)
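The runs-to-wins rule of thumb behind the 91-to-99-win range can be sketched as below. The 81-win baseline is an assumption: it is what a perfectly average team wins over a 162-game schedule. The function name is illustrative.

```python
def expected_wins(runs_saved, runs_per_win=10, baseline_wins=81):
    """Wins for an otherwise average team whose pitchers save runs_saved runs."""
    return baseline_wins + runs_saved / runs_per_win


# Range of Yankee pitching runs saved quoted for 1996-2004 (excluding 2000).
print(round(expected_wins(101)), round(expected_wins(179)))  # 91 99
```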

Why Jeter Will Still Be a First Ballot Hall of Famer

You have a good shot at success if you "make your numbers," that is, you produce the numbers that are asked of you. But to be an astounding success usually also requires nearly perfect timing. Derek Jeter produced all the numbers anyone ever asked of him with the best possible timing.

From the very beginning of his career, Derek Jeter churned out .300 batting averages. His end-of-season career batting average never fell below .300. In spite of all the new statistical thinking that has taken over baseball, which shows that batting average accounts for only about half of total offensive effectiveness, and of course tells you nothing about defensive value, the number ".300" still captivates fans and sportswriters. That is why this article has translated everything Derek Jeter (and other leading post-integration shortstops) did on the field into a number that looks and scales like batting average.

If you adjust the official career batting average of Derek Jeter for the fact that batting average overrates the one and only part of batting in which Jeter excelled (hitting singles), his true batting average, that is, his GPA, would be .280. And if you further adjust that true batting average for the singles he allowed, it would fall to .252. Even if you boosted his true batting average for his base-running value, it would only be .258, which was coincidentally exactly the average plain GPA in the American League over the course of Derek Jeter's career.

Stated as bluntly as possible, Jeter was, on average over the course of his career, exactly as valuable as

a perfectly average player on offense (compared to hitters at all positions), who also

played the position requiring the most fielding skill (shortstop),

fielded that position as well as the average fielder at that position would, and

showed up nearly every day for seventeen regular seasons and the equivalent of one full season during the post-season.

In his best handful of seasons he clearly performed at a no-doubt all-star level. For most of his other seasons he was clearly an above-average player (and thus far better than a replacement level player). He was extraordinarily durable season after season, and had one of the greatest post-season careers in baseball history. If you'll pardon the pun, he's a defensible choice for the Hall of Fame.

The reason that his true net value has not been recognized is simply that the one thing he was great at (hitting singles) not only officially counted, but was over-weighted by the most well-known baseball statistic (batting average), while the one thing that reduced his value (allowing singles) has never been counted officially and, until DRA came along, never reported season-by-season over the course of his career using solely an open-source statistical model.

Now, about Derek's timing.

As shown above, his first starting season, 1996, exactly coincided with the transformation of the Yankee pitching staff from mediocrity to utter and extended dominance.

By the morning of October 27, 2000, Derek Jeter owned four World Series rings and a glittering .322 career batting average. He had just been named the MVP for the 2000 World Series, the first Subway Series since baseball's so-called Golden Age of the 1950s. He was the Prince of the City during a new Golden Age—indeed, the last Golden Age of America.

I think we can all agree that things outside of baseball have not gone so well since October 2000. Within the baseball world, Moneyball accelerated the shift to non-traditional statistics, such as on-base percentage, WAR, and new estimates of fielding value. Teams began to hire PhD statisticians and purchase proprietary fielding data to value players more precisely. Various experts using proprietary fielding data began to report that Jeter's fielding was the worst among full-time shortstops.

But millions of fans had already fallen in love with Derek Jeter at that golden moment before things got so ... difficult. They never let that love go. They've passed it on to their kids. After watching dozens of those fans, not-so-young and young, light up when meeting Derek in a recent commercial, shot in Yankee black-and-white in the shadows of the elevated IRT, I don't want them to let that love go. I will be happy for them when Derek is inducted into the Hall of Fame.

Yet I also want the BBWAA to give Alan Trammell his due. In his prime, he made as great a contribution to his teams as any shortstop since the Second World War. It just wasn't encapsulated in a traditional .300 batting average, and it was made in Detroit in the '80s, not New York circa 2000. So Alan wasn't paid hundreds of millions of dollars in salary and endorsements. He didn't (at least as far as I know) date Mariah Carey, Jessica Alba, Scarlett Johansson, Jessica Biel, Minka Kelly, and a half-dozen other gorgeous models and actresses. Nobody ever gave him a Roberto Clemente Award for "combining good play and strong work in the community." He hasn't been acclaimed as the 11th Greatest World Leader by Fortune magazine.

I understand why baseball fans fell in love with Derek, and why all those actresses and models have fallen for him, and why the editorial board of Fortune magazine exalts him as a World Leader, and why the BBWAA may honor him as the first unanimous first-ballot Hall of Famer. All I ask of the BBWAA is that they find it in their hearts to show enough love for Alan Trammell when they fill out their ballots next month and, if necessary, 13 months from now, so that Alan may welcome Derek to Cooperstown on July 26, 2020.

Michael Humphreys lives in Palo Alto, California, and advises on international tax matters at Ernst & Young LLP. He is the author of Wizardry: Baseball's All-Time Greatest Fielders Revealed (Oxford University Press 2011).

Top photo via Getty

1 Of course, with the offensive explosion beginning around 1993 and ending a few years ago, reaching 500 career home runs no longer guarantees a spot in the Hall of Fame, but it certainly did at the time all six current Hall of Famers retired.

2 To be clear, I think the WAR websites are fantastic. The first developer of WAR, Sean Smith, deserves the thanks of baseball fans everywhere. Published WAR estimates, particularly for seasons since about 1950, generally give good estimates of overall value. That being said, estimates of defensive value since 2003 are based on privately collected, non-published estimates of the location and trajectory (or speed) of batted balls recorded after watching and re-watching videos from televised broadcasts. Estimates of fielding value for seasons from about 1950 through 2002 are based on public data, and the general principles for using such data are described on the websites, but many aspects of the calculations are not, so the numbers are not reproducible. For seasons before 1950, the basic principles behind the calculations are pretty much unknown. I also am not aware of how the "line" defining replacement value is determined. I believe that all of the offensive metrics are based on models that have been around for many years, but I'm not even sure about the exact formulas used for the offensive portion of WAR.

3 As Moneyball made plain to millions, batting average is actually not a very good measure of a player's contribution at the plate: walks are ignored, and home runs, triples and doubles are not valued any more than singles. Instead, on-base percentage (OBP), which counts the ability to draw walks, and slugging percentage (SLG), which gives equal weight to each base rather than to each hit (e.g., twice as much credit for a double as for a single) are more informative.

Thirty years ago, the great baseball statistical analyst Pete Palmer proposed simply adding a batter's OBP and SLG. The resulting number, On-base Plus Slugging (OPS), has a much stronger correlation than batting average with run production at the team level and is even sometimes shown on televised baseball games. However, it turns out that a weighted average of OBP and SLG has an even higher correlation with run production: Gleeman reported the optimal weighting as 1.8 for OBP and 1.0 for SLG.

What Gleeman figured out is that if you take the sum of 1.8 times OBP and 1.0 times SLG and divide it by 4, you get a number that 'scales' like batting average.

For lower-scoring periods of baseball history, such as the last couple of seasons and perhaps the entire period from the Second World War through 1992, GPA matches up with batting average somewhat better if you multiply OBP by 2.0 rather than 1.8. In that case, you can practically calculate GPA in your head: just take half of SLG, add OBP, then take half of that. So if you saw a player this season with, say, a .500 SLG and .380 OBP, his GPA would be half of .500, or .250, plus .380, or .630, cut in half to yield .315. I have also found some evidence that a 2-to-1 ratio between OBP and SLG may actually better predict run-production than the 1.8-to-1 ratio.
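The GPA formula described in this note is simple enough to sketch in a few lines. The function and parameter names are illustrative; the weights and the worked example (.380 OBP, .500 SLG) come from the text.

```python
def gpa(obp, slg, obp_weight=1.8):
    """Weighted sum of OBP and SLG, divided by 4 to scale like batting average."""
    return (obp_weight * obp + slg) / 4


# The worked example from the text, using the 2-to-1 weighting.
print(round(gpa(0.380, 0.500, obp_weight=2.0), 3))  # 0.315

# The same player under the 1.8-to-1 weighting.
print(round(gpa(0.380, 0.500), 3))  # 0.296
```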

4 For those of you familiar with weighted on-base percentage (wOBA), the correlation between GPA and wOBA, when rounded to two decimal places, is almost perfect. For example, the correlation between GPA and wOBA for all 2014 players who had enough plate appearances to qualify for the batting title is .99. For all players who had at least 1,000 plate appearances over the past 20 seasons, the correlation between their GPA and wOBA over that period is 1.00.

5 Palmer also created "Fielding Linear Weights," but the formulas were based on intuition, rather than mathematical modeling, were never verified in any way, are not integrated with pitching and have been shown to be subject to significant biases.

6 DRA essentially estimates the number of successful fielding plays a fielder 'should' have made at his position if he were average; the difference between the plays he actually made and 'should' have made—i.e., his net "skill" plays—is multiplied by the estimated runs per successful play to estimate runs "saved" (or if negative, "allowed") compared to the league-average fielder. The DRA estimates of runs "saved" or "allowed" by each fielder and pitcher (where pitcher "plays" are walks, strikeouts, etc.) on a team add up to a team estimate of runs "saved" or "allowed." The DRA estimate of team runs allowed each season is just about as accurate as Palmer's Linear Weights estimates of team runs scored each season.

7 For these calculations, I'll take the sum of "Rbaser" and "Rdp" as reported by Baseball-Reference.com and divide that by the value in runs of converting an out into a single, which is the sum of the value of an out (about .27 runs) and the value of a single (.47 runs), or .74 runs.
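The conversion between runs and singles in this note can be sketched as follows. The run values are the ones quoted above; the function names are hypothetical. That the same .74 factor underlies the article's "460 singles is about 340 runs" figure is an assumption, though the arithmetic is consistent with it.

```python
OUT_VALUE = 0.27     # approximate run value of an out, per the note
SINGLE_VALUE = 0.47  # approximate run value of a single, per the note
RUNS_PER_SINGLE_SWING = OUT_VALUE + SINGLE_VALUE  # turning an out into a single


def runs_to_singles(runs):
    """Express a run total as an equivalent number of singles."""
    return runs / RUNS_PER_SINGLE_SWING


def singles_to_runs(singles):
    """Express a number of singles allowed (or hit) as runs."""
    return singles * RUNS_PER_SINGLE_SWING


print(round(singles_to_runs(460)))  # 340
```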

8 The only difference is that we don't have exact counts of ground balls by batter hand, so this must be indirectly estimated using regression analysis.

9 The reasons park effects should generally be minimal for fielders are pretty simple. The dimensions of infields are identical, and now that artificial turf has mostly disappeared, the surfaces on which ground balls bounce are essentially the same. Outfield dimensions are fairly standard as well, at least compared with parks before the 1970s. Left field in Fenway is very small, so a lot of potentially field-able high fly balls to left go over the Green Monster that would be caught in other ball parks. In Coors, the sheer area of the outfield is so vast (because the fences are set back) that the relative percentage of balls hit in the air to the outfield that drop in goes up.

10 I began writing this article at the beginning of the 2014 season, so I only had complete data through 2013. For purposes of estimating Jeter's singles allowed in 2014, I have assumed the same park factor calculated through 2012.

11 Note that due to rounding of the various components of per-season estimates, the grand totals do not exactly add up. The 2014 numbers are estimates; the open-source data I use is provided by Retrosheet.org a couple of months after the end of each season. The final numbers will have no material impact on the conclusions reached in this article.

12 I have the feeling the entirely deserving Hall of Famer Barry Larkin eventually made that "decision", and was justified in doing so, because his team was much worse off when he was injured. As mentioned in Wizardry, legendary Brooklyn Dodger Pete Reiser should have taken it easier in the field to stay healthy, but destroyed himself running into outfield walls.

13 The Yankees signed Jeter after the 2000 season to a ten-year, $189 million contract. One could fairly ask why they would sign him for so much money if they realized how bad he was in the field. My guess is that they knew he was bad, but couldn't believe he was quite as bad as indicated above, and focused on three things: 1) they might be able to minimize the impact of his fielding using the pitching staff and perhaps some clever grounds-keeping, 2) they could still count on him to be a durable, well-above-average player, and 3) they were, above all, in the entertainment business, and Jeter was by far their most marketable product.

14 This may have implications for evaluating infielders throughout history. As mentioned above, the consensus view has been that park factors for infielders are not material, and the statistical relationship throughout each large sample of major league history between pitcher ground ball fielding plays and shortstop ground ball fielding plays is so weak that it seems reasonable to me to assume pitcher fielding can be ignored when evaluating shortstop fielding. It may be the case that only Jeter was bad enough in the field for a sufficient stretch of time (but good enough at the plate) to cause a team actually to make a long-term commitment to altering its pitcher fielding and park conditions to help him. If Jeter was truly unique in that respect, we can continue to ignore parks and pitcher fielding for everyone else. Perhaps someone can do the research to back up this working assumption.

15 For the real stat-heads among you, the whole point of "batted ball data" (privately collected estimates of the location and trajectory or speed of each batted ball, discussed at length in part B of the technical appendix) is to reduce the random variation in "fair" fielding opportunities.

You do this by subdividing the total of 2000 ground balls (already split for batter hand) into location-speed buckets. You can get very far just by creating three buckets: (i) all ground balls, given batter-handedness, hit in a direction and at a speed that no shortstop would ever catch ("no-chance ground balls"), (ii) all ground balls, given batter-handedness, hit in a direction and at a speed that all major league shortstops would convert into outs except in the case of gross errors ("no-doubt ground outs") and (iii) all the rest, split by batter hand (true chances subject to chance, or "chancy chances").

Assume that for the typical distribution of 2000 ground balls 'faced' by the average shortstop, there are 1550 no-chance ground balls, 350 no-doubt ground outs, and thus only 100 chancy chances, of which the average shortstop makes 50. Then the standard deviation in expected plays would be the square root of (1/2) × (1/2) × 100, or +/− 5 plays, rather than +/− 18 as before. Of course, that is only true if we know the actual number of no-doubt ground outs and the number of chancy chances. But the number of no-doubt ground outs and chancy chances is itself subject to chance! The standard deviation could be brought even further down by subdividing the chancy chances into categories of 'low', 'moderate' and 'high' probability chances.
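The arithmetic in this example can be checked with a minimal sketch, treating each chance as an independent trial with a fixed success rate (a binomial model). One assumption to flag: the "+/− 18 as before" figure is reproduced here by treating all 2000 ground balls as trials at the overall rate of 400 plays per 2000, which is my reading of the earlier calculation rather than something stated in this footnote. The function and variable names are illustrative only.

```python
import math


def binomial_sd(chances: int, success_rate: float) -> float:
    """Standard deviation of plays made over independent chances."""
    return math.sqrt(chances * success_rate * (1.0 - success_rate))


# Figures taken from the example in this footnote.
total_ground_balls = 2000
no_doubt_outs = 350
chancy_chances = 100
chancy_success_rate = 0.5

# Expected plays: every no-doubt out plus half of the chancy chances.
expected_plays = no_doubt_outs + chancy_chances * chancy_success_rate

# ASSUMPTION: without buckets, every ground ball is one trial at the overall rate.
overall_rate = expected_plays / total_ground_balls
sd_unbucketed = binomial_sd(total_ground_balls, overall_rate)

# With buckets, only the chancy chances contribute random variation.
sd_bucketed = binomial_sd(chancy_chances, chancy_success_rate)

print(f"expected plays: {expected_plays:.0f}")
print(f"SD without buckets: {sd_unbucketed:.1f}")
print(f"SD with buckets: {sd_bucketed:.1f}")
```

Under these assumptions the unbucketed figure comes out just under 18 and the bucketed figure is exactly 5, which matches the numbers quoted above.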

Unless you have unbiased batted ball data, you can't calculate the base-case number of no-doubt ground outs and lower bounds for the 'noise' in estimated net skill plays. Nevertheless, I'm inclined to think the maximum standard deviation in expected plays per season for shortstops is probably lower than 18. The standard deviation goes up the closer to 50% the success rate is and the higher the number of maximum possible chances. The absolute maximum number of ground balls that even the best shortstop in the last twenty years had any chance to field in any season could not have exceeded 800. Assuming the worst shortstop facing those chances would have caught only 50% yields a maximum standard deviation in plays made of something closer to the square root of .5 × .5 × 800, or 14. The typical standard deviation would be even lower—perhaps as low as 10.
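To see why a 50% success rate over the largest plausible number of chances gives the upper bound, here is a small sketch under the same binomial assumption (independent chances, one fixed success rate). The 800-chance ceiling and the 50% rate come from the paragraph above; the other success rates in the loop are illustrative values I chose for comparison, not estimates for any player.

```python
import math


def binomial_sd(chances: int, success_rate: float) -> float:
    """Standard deviation of plays made over independent chances."""
    return math.sqrt(chances * success_rate * (1.0 - success_rate))


max_chances = 800  # ceiling on fieldable ground balls, from the text

# Illustrative success rates only; 0.5 is the worst case named in the text.
illustrative_rates = (0.3, 0.5, 0.7, 0.9)
for rate in illustrative_rates:
    print(f"success rate {rate:.0%}: SD = {binomial_sd(max_chances, rate):.1f} plays")

worst_case_sd = binomial_sd(max_chances, 0.5)
print(f"maximum SD at 50%: {worst_case_sd:.1f} plays")
```

The product p × (1 − p) peaks at p = 0.5, so any other success rate with the same number of chances yields a smaller standard deviation.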

16 Per the prior footnote, the standard deviation in Jeter's plays made during his bad seasons is closer to what I think is the likely standard deviation in observed net plays attributable to random variation in the type of ground balls faced, which I think supports the case that Jeter was genuinely okay in 1996 and 2004-06, and genuinely bad in the other seasons, perhaps for the plausible reason offered.

17 Carrying on with the theme of the prior two footnotes, if over the long haul, and after the DRA adjustments, Jeter faced a fair number of no-doubt ground out opportunities and faced 100 chancy chances in each of his 18 seasons, or 1800 chancy chances, the standard deviation in expected plays for his whole career would be 21. Of course, that raises the question of the variation in the number of no-doubt ground outs and chancy chances ...
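The career figure follows from the fact that variances, not standard deviations, add across independent seasons. A minimal sketch, assuming independent seasons, the 100 chancy chances per season from the text, and the 50% success rate used in footnote 15 (variable names are illustrative):

```python
import math

seasons = 18
chancy_chances_per_season = 100
success_rate = 0.5  # ASSUMPTION: same 50% rate as in the footnote 15 example

# Variance of plays made in a single season.
season_variance = chancy_chances_per_season * success_rate * (1.0 - success_rate)

# Independent seasons: the career variance is the sum of season variances.
career_sd = math.sqrt(seasons * season_variance)

# Equivalent calculation that pools all 1800 chances at once.
career_chances = seasons * chancy_chances_per_season
career_sd_pooled = math.sqrt(career_chances * success_rate * (1.0 - success_rate))

print(f"single-season SD: {math.sqrt(season_variance):.1f} plays")
print(f"career SD: {career_sd:.1f} plays")
print(f"career SD per season: {career_sd / seasons:.2f} plays")
```

The career standard deviation grows only with the square root of the number of seasons, so the noise per season shrinks as the career gets longer.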

18 Multiply each single by the sum of the value of a single (about .47 runs) and the value of an out (about .27 runs).
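As a quick sketch of this conversion: one way to read the sum is that each single allowed both puts a runner on and forgoes an out, so the swing is the two values combined. The run values are the approximate ones quoted above; the count of 100 singles is a hypothetical input for illustration, not a figure from this article.

```python
RUN_VALUE_SINGLE = 0.47  # approximate value of a single, from the text
RUN_VALUE_OUT = 0.27     # approximate value of an out, from the text


def runs_from_singles(singles_allowed: float) -> float:
    """Convert net singles allowed into runs, using the sum of both values."""
    return singles_allowed * (RUN_VALUE_SINGLE + RUN_VALUE_OUT)


# HYPOTHETICAL input for illustration only.
example_singles = 100
print(f"{example_singles} singles ~ {runs_from_singles(example_singles):.0f} runs")
```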

19 "Def" runs shown in the WAR calculation seem to be not runs allowed relative to the average shortstop, but that number further adjusted for the fact that shortstop is the most difficult position to field.
