4 To 6 Weeks, My Ass: Why NFL Injury Estimates Are Bullshit

Not long after Robert Griffin III hobbled out of Week 2 with an ankle injury, the team and the media went into their usual casualty-reporting routine. First was the wait for the diagnosis, which arrived the next day—a dislocated left ankle. Then came the dissemination of the team's early timetable for the player's return. "We'll know in a few more weeks how long he'll be out," Washington coach Jay Gruden said on Sept. 15. "He's going to put it in a cast for a couple weeks ... but we just don't know how long the recovery time is as of now." A few days later, the estimate hardened into a more certain-seeming range. "It's just going to be 10 days in a cast," Gruden said, "and then we'll look at it from there. And it'll be a rehab process, probably four to six weeks, somewhere in there."

There it was. Four to six. If you're an NFL fan, those digits are virtually yoked together in your mind, like some sort of super-numeral that signifies "serious injury but healthy in time for the playoffs." Four to six tells a little story about the NFL. It says that in a sport of explosive contingency, the toll is knowable, containable; it exists within established parameters. It doesn't, though. Not really. Although we eventually found out that Griffin had an exceedingly rare type of dislocation that didn't damage the surrounding tendons, given what we know about dislocated ankles in the NFL, a prognosis of four to six weeks was, at the time, obviously insane. But it's an odd thing about the business of professional football that misinformation is spread as a matter of course by, among, and about people who know it's not the truth but who, for various reasons, decide to play along anyway.

The Database

It's strange, in a way. In a world where I can easily look up how many touchdowns Frank Gore scored in 2013 when the 49ers were up by between one and eight points (four TDs), there is no place for me to look up a detailed history of Gore's injuries, even for the ones reported publicly. Injuries, particularly in the NFL, are one of the few topics in sports where old rules of thumb like "out four to six weeks" are still thrown around without anyone checking them against any data, in large part because no such database exists. So I built one.

Having done this, I can tell you that Gore has played through at least three ankle sprains, one knee hyperextension, and one bruised rib since the start of the 2011 season, all without missing a single game. I can also tell you that despite what injury reports might say, that isn't all that uncommon in the NFL.

In other ways, though, this is perfectly normal: in what world should any schmuck with a Wi-Fi connection be allowed to pry into what are, in effect, confidential medical records? That's the biggest reason the database, as it's constructed, is incomplete. One of my primary sources for finding player injuries to research and index is fantasy football coverage, which of course focuses on offensive skill players. Nobody really cares much when a swing tackle misses a game or two, so those injuries are tougher to find. I've taken steps to make sure I'm not overlooking defensive and non-skill-position injuries, but it's still a potential flaw, and I acknowledge it.

Also, in an attempt to focus on the signal, I've tried to tune out some of the noise. In my case, that has meant counting only the times that players actually miss games, not the times they nurse an injury (or, more commonly, when the team nurses its injury report), are limited in practice, and then still play on Sunday. I don't care one bit about Tom Brady's chronically sore shoulder that has kept him on the injury report since he was but a wee lad. Partly because I'm a one-man operation, I've focused on the period from about 2010 to the present. I'll dig a little further back for a rare injury or something else worth noting, but most of my data are very recent. That could be viewed as a limitation, but it also means that my data reflect modern sports medicine; I'm not using antiquated Jerry Rice-era ACL reconstruction timelines. Also, with the rise of fantasy football and online sports betting, sports reporting has gotten much deeper, and it is now easier to get clear and specific injury information.

I should also note that I count re-injuries as continuations of the initial injury. For example, DE/OLB LaMarr Woodley had a rough 2011 season due to a recurring hamstring injury. He missed four weeks, returned to play for one week, missed one week, returned to play for one week, and then missed the final two weeks of the season. Because this was all the same injury, I record Woodley as having missed exactly seven weeks with it (4 + 1 + 2), rather than as three separate injuries. I represent re-injuries this way because I think it gives a clearer picture of the true risk.
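To make the counting rule concrete, here is a minimal Python sketch of how re-injury stints could be merged. It assumes each absence is recorded as a hypothetical `(injury_id, weeks_out)` pair, with any re-injury reusing the original injury's ID; the function name and data layout are illustrations only, not how the Questionable To Start database is actually stored. Weeks in which the player returned and played are simply not recorded as absences.

```python
from collections import defaultdict


def weeks_missed_per_injury(absences):
    """Sum missed weeks per injury, treating re-injuries as continuations.

    `absences` is a list of (injury_id, weeks_out) stints in chronological
    order. Stints that share an injury_id (e.g. a hamstring that flares up
    again after the player returns) are merged into one running total
    instead of being logged as separate injuries.
    """
    totals = defaultdict(int)
    for injury_id, weeks_out in absences:
        totals[injury_id] += weeks_out
    return dict(totals)


# Woodley's 2011 hamstring as described above: out 4, back 1, out 1,
# back 1, out 2. The injury_id string is a hypothetical label.
woodley_2011 = [
    ("woodley-hamstring-2011", 4),
    ("woodley-hamstring-2011", 1),
    ("woodley-hamstring-2011", 2),
]
print(weeks_missed_per_injury(woodley_2011))  # {'woodley-hamstring-2011': 7}
```

Counting the three stints separately would instead log three short injuries of four, one, and two weeks, which understates how much time the hamstring actually cost.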

Perhaps the biggest potential flaw lies in the competitive nature of football itself. Teams and players are rarely honest and forthcoming about the severity of injuries. Even when there is an attempt to be honest, it's still pretty common to get multiple conflicting reports as to what the exact diagnosis or treatment is. Plenty of teams, like the Patriots, flat-out refuse to clarify an injury beyond a vague body part such as "ankle" or "hip." Having said all that, the only response I can really give is that I have attempted to address these flaws, and I have been as honest and accurate as I know how to be.

What Makes An NFL Injury Database Useful

The question that always comes next is, "What is the value of this database?" Historical injury data are not exactly predictive of future injury recoveries—but they're not a bad start, either. I find the historical record to be a far more accurate predictor than the usual media heuristics that are applied to each new injury. An easy example here is Aaron Rodgers and his 2013 collarbone fracture. This example is near and dear to me, as it was one of the inspirations for my database and blog, QuestionableToStart.com. In a Nov. 4, Week 9 game, Rodgers broke his left, non-throwing collarbone. Almost right away, the sports media seemed to agree that Rodgers would miss about three weeks. Some hedged it by saying things like "at least three weeks," but the three-week number always seemed to be present somewhere in the estimate. Rodgers himself fueled this by saying he might be able to play in the Thanksgiving game against Detroit, only 24 days after he'd been injured.

Out of curiosity, I started looking at other players with fractured collarbones. Turns out there was an easy comparison just sitting on the shelf. In Week 7 of 2010, Tony Romo fractured his left, non-throwing collarbone. Most of the media reports at the time put the timetable for Romo's return at between six and eight weeks. Romo sat out eight weeks while rehabbing, and then had some sort of a setback and was placed on injured reserve for the final two games of the season. Yes, there are all sorts of problems with this comparison. It's a ridiculously small sample size. No two injuries are exactly alike. No two players heal exactly the same or at the same rate. We don't have access to medical information that might give us more details about either injury. Yes, yes, yes, and yes. But, even having said all that, were we to believe that Rodgers's injury was half as severe as Romo's, without any explanation as to why that might be? Were we to believe that modern medicine had somehow halved the expected recovery time within the last three years? Why was nobody comparing the two?

After building my injury database, I can tell you that I have no idea where that three-week estimate for Rodgers came from. Wide receiver Danny Amendola missed four weeks with a fractured collarbone in 2012; cornerback Antoine Winfield missed seven weeks with one in 2011; cornerback Charles Woodson sat out 10 weeks with the same injury in 2012. The best-case scenario was WR Marques Colston, who missed only two weeks at the start of 2011, but that was partly because, unlike Rodgers and Romo, he'd had a plate surgically implanted on the bone. Also, a receiver like Colston or Amendola doesn't need the same range of motion or arm strength as a quarterback, and he was slowly eased back into the rotation after those two missed weeks, a luxury quarterbacks don't have. All my data pointed to a much longer expected recovery for Aaron Rodgers. For those of you who don't know the end of that story, Rodgers ended up missing seven weeks, a number much more in line with the recent historical numbers I was finding.
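The same comparison can be written down as a quick calculation. This Python sketch uses only the collarbone cases cited above. Treating Romo's eight rehab weeks plus his two games on injured reserve as a single 10-week absence follows the re-injury counting rule described earlier, and the function name `compare_to_estimate` is hypothetical.

```python
from statistics import median

# Weeks missed with a fractured collarbone, for the comparables cited above.
comparables = {
    "Tony Romo (2010)": 10,        # 8 weeks of rehab, then IR for the final 2 games
    "Danny Amendola (2012)": 4,
    "Antoine Winfield (2011)": 7,
    "Charles Woodson (2012)": 10,
    "Marques Colston (2011)": 2,   # had a plate surgically implanted
}


def compare_to_estimate(history, estimate_weeks):
    """Compare a media estimate against historical weeks-missed values."""
    weeks = list(history.values())
    return {
        "median": median(weeks),
        "range": (min(weeks), max(weeks)),
        # How many comparable players came back as fast as the estimate or faster.
        "at_or_below_estimate": sum(1 for w in weeks if w <= estimate_weeks),
    }


print(compare_to_estimate(comparables, 3))
# {'median': 7, 'range': (2, 10), 'at_or_below_estimate': 1}
```

Only Colston, with his surgical plate, came in at or under three weeks, and the median of these five comparables is seven, which is what Rodgers ended up missing. Five cases are far too few to be predictive, but they are enough to make the three-week figure look optimistic.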

What The Media's "Team Doctor" Estimates Miss

Aside from specific player anecdotes like Rodgers's fractured collarbone, this database can sometimes highlight the true complication risk of injuries.

In the above example of RG3, the extremely small number of players who have suffered a dislocated ankle made it easy to identify the pool. More typically, when a player is hurt, the media stick to their rules of thumb, fitting the injury into the accepted if imprecise framework. It's the simplest approach, after all. Team doctors don't do much talking to the press; coaches speak in a sort of sub-English when it comes to the health of their players; the players themselves often know less about their own bodies than their teams do; and fans, aware of the code, see injury timeframes less as a range of real possibilities than as a rough index of the team's concern. What we're left with, then, is Chris Mortensen shifting up onto one cheek and farting out a couple rounds of "4-to-6 weeks" before tossing it back to Wingo.

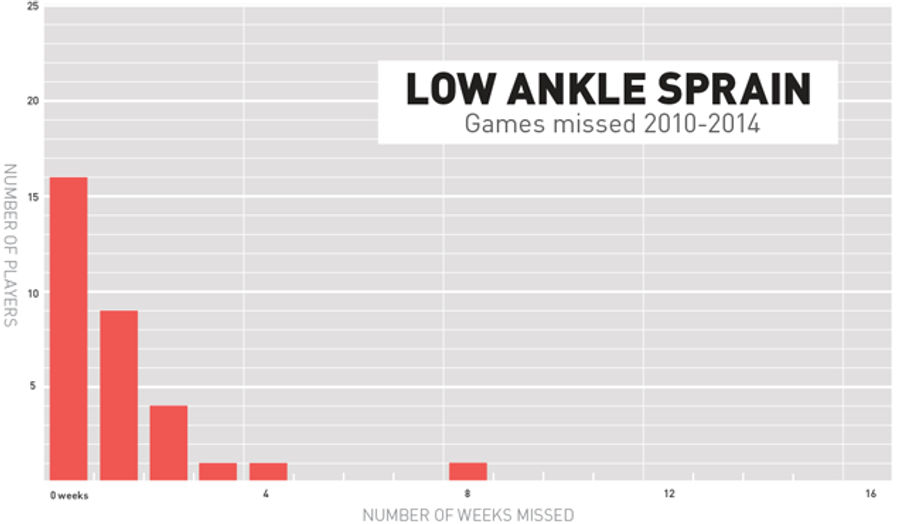

These heuristics take a one-size-fits-all approach to sports reporting, and we all know that one-size-fits-all anything rarely offers a comfortable fit. But sometimes, they fit well enough to give the illusion that they're based on something real. Take the common low ankle sprain as an example. Here's a chart of all the reported low ankle sprains since 2010, and how long it took each player to return to play:

For the most part, this is a simple injury, and the traditional heuristic more or less holds up. A reporter will say that a player with a regular ankle sprain will be out "maybe a week," and he or she will mostly be correct. A lot of players miss zero weeks and a lot miss one week, but there aren't too many high-risk complications as we move to the right of that. Also, because of the way I collect data, there are most likely far more cases of players who missed exactly zero weeks than the chart shows; even a glance at the numbers suggests this is merely a fraction of the sprains suffered in the league.

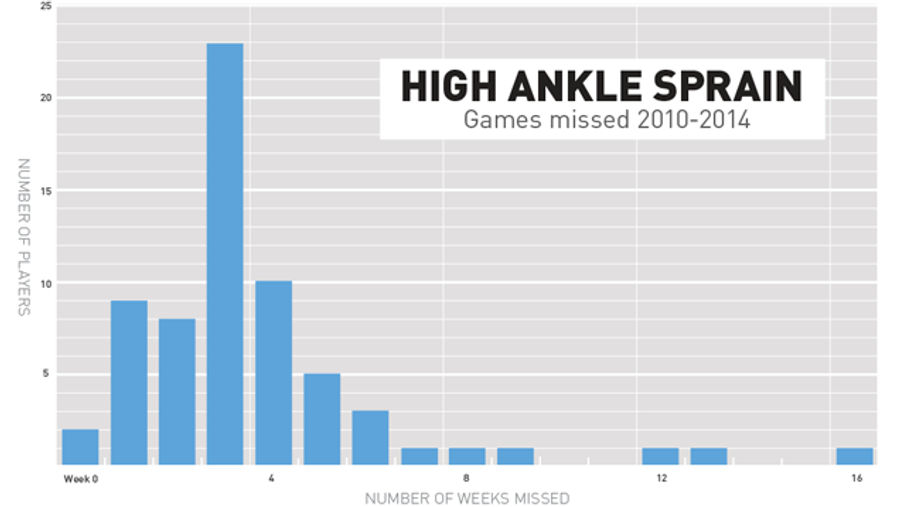

On the other end of the risk spectrum, let's take a look at the dreaded high ankle sprain. For those of you who don't live in the strange world of NFL injuries, the high ankle sprain is much more severe than the regular low ankle sprain that you and I get when we forget our age and try to break out the ol' spin move at the gym. The high ankle sprain affects the stability of the ankle and the lower leg, and in some ways it is more akin to a fractured lower leg than to a traditional ankle sprain. (A typical sprain is simple ligament damage; the ligaments injured in a high ankle sprain, by contrast, are the ones that hold your two lower leg bones, the tibia and fibula, together and keep them attached to your ankle. If the injury is severe enough, those two bones can move independently in ways they aren't designed to, causing all sorts of trouble. It's a structural issue, and severe high ankle sprains often require surgery.) Here is a chart showing the return-to-play timelines of 66 players who have suffered this injury since the 2010 NFL season:

As opposed to the low ankle sprain, media estimates for this injury are usually all over the map. They range from the optimistic "week to week" all the way to the more pessimistic "up to six weeks." Mostly, though, they revolve around three weeks. This is where my database is really valuable and, if I may say so, downright insightful. Yes, three weeks isn't a bad guess, as a full 23 players (35 percent of the group) came back after missing exactly three weeks. But the media estimates miss the deeper story, which is that there is a lot more uncertainty here than they admit. Some players will miss zero games or one game (though of the six offensive skill players who have tried, all but the statuesque Peyton Manning have had miserable showings or aggravated the injury). Some players will miss much more than six weeks, even up to the entire remaining season. Yes, these ends of the bell curve are small, and you can point out that we're talking about a very small sample here (often only one or two players over the last few seasons), but these outliers do occur.

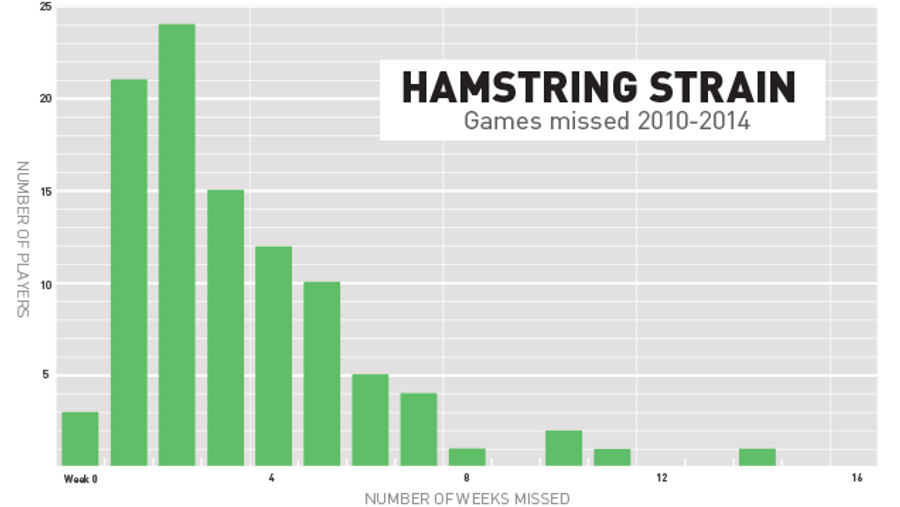
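The percentages in this section come from tallying how many players landed on each weeks-missed value. Below is a minimal Python sketch of that tally plus a tail share, meaning the fraction of players who missed some threshold number of weeks or more. The function name and the toy sample are made up for illustration; the only real figure used is the 23-of-66 count quoted above.

```python
from collections import Counter


def return_to_play_summary(weeks_missed, tail_threshold):
    """Summarize weeks missed, one entry per injured player.

    Returns (shares, tail): the share of players at each weeks-missed
    value, and the share who missed `tail_threshold` or more weeks.
    """
    if not weeks_missed:
        raise ValueError("need at least one player")
    n = len(weeks_missed)
    counts = Counter(weeks_missed)
    shares = {weeks: count / n for weeks, count in sorted(counts.items())}
    tail = sum(1 for w in weeks_missed if w >= tail_threshold) / n
    return shares, tail


# The one real figure from the text: 23 of 66 high ankle sprains cost exactly three weeks.
print(round(100 * 23 / 66))  # 35

# A made-up toy sample (NOT real data), only to show the shape of the output.
toy = [0, 1, 1, 2, 3, 3, 3, 7]
shares, tail = return_to_play_summary(toy, tail_threshold=4)
print(shares)  # {0: 0.125, 1: 0.25, 2: 0.125, 3: 0.375, 7: 0.125}
print(tail)    # 0.125
```

Reporting the tail share alongside the most common outcome is the point: "three weeks" describes the peak of the distribution, while the tail share describes how often a player blows well past it.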

With other injuries, the media seem slow to admit that the player might have serious complications or a high risk of re-injury. Hamstring strains and tears are a good example, as they can linger and lead to repeated and extended rehabs. A strain is a tear in the muscle, and the tear can range from mild to severe; the severe end of that spectrum is a full tear, which is why I have lumped these injuries together. Typically, reporters are hesitant to say anyone will miss even one game with a hamstring injury and exceedingly hesitant to mention that it might be much more severe than that. Look at Roddy White, who missed three weeks last year (wrapped around a bye week, so four total) with a serious hamstring strain and was slowed again this year by another hamstring injury. The early word on him ignored the range of hamstring injuries, and reporters doubled down on the notion that he would "no doubt" play in the following game. (He was declared inactive.)

Here's a chart of 99 players who have had reported hamstring injuries since 2010:

Here, I need to point out that my "zero weeks missed" column is way off. Because of the pitfalls I mentioned before, the database has a blind spot for players who suffer injuries but never miss a game, and hamstring strains are common in that group. I'm working on that, but that's just how it is right now. Go ahead and imagine the "zero weeks missed" column as ridiculously high, since tons of players would be in that category.

But ignoring that, we can see something interesting as we move to the right. Yes, as usual, the estimates aren't exactly awful. A lot of players miss zero weeks. A lot of them miss one week. A lot miss two weeks. But what the heuristics overlook is that a decent chunk of players are missing extended time with this injury. There is a significant pool of players who missed four, five, six, or seven weeks with this injury. Beyond that, there aren't many players who missed eight or more weeks … but there are a few, and they're largely ignored when people talk about the severity of hamstring injuries.

If my database serves any purpose, it's to bring those outliers into view. The best stats-based journalism, such as Nate Silver's work in politics, takes a probability-based approach and admits to margins of error. Good statistical analysis does not attempt to hide the tail-end risk or dismiss outliers; it simply presents them as the potentially rare events they are. An injury database is a good step toward reminding people of the violent uncertainty at the heart of the NFL.

Charts by Sam Woolley / Top image by Sam Woolley / Jim Cooke

Craig Zumsteg runs the Questionable To Start blog and database. He currently lives in suburban New York, where there are a surprising number of skunks.
