The SEC Really Does Benefit From Media Bias In Polls

This past week, four of the top five teams in the Associated Press College Football Poll hailed from the SEC West Division. Nebraska coach Bo Pelini, among others, wondered aloud whether ESPN's ownership of the brand-new SEC network, which launched this year, might be responsible for such a coincidence.

"I don't think that kind of relationship is good for college football," Pelini said at his news conference. "That's just my opinion. Anytime you have a relationship with somebody, you have a partnership, you are supposed to be neutral. It's pretty hard to stay neutral in that situation."

As a PAC 12 fan, I often wonder about East Coast bias in college football, especially around Heisman season. For example, was Andre Williams of Boston College really more deserving of the award than Ka'Deem Carey of Arizona? Most of the west coast would beg to differ. This, I argue, translates to the college football rankings themselves being biased toward and against certain conferences. As an Arizona State fan, it's particularly frustrating to see my team consistently ranked worse in the AP poll than any advanced metric would suggest.

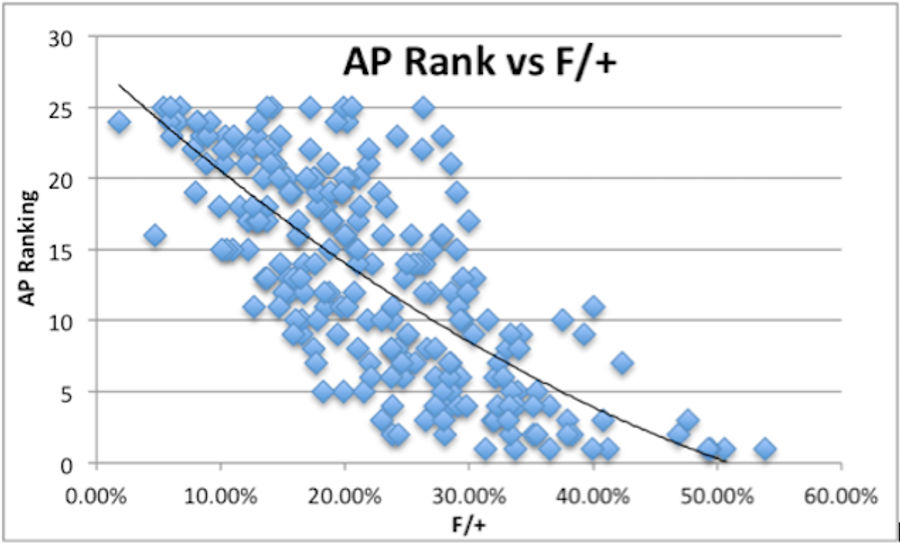

To see if these suspicions are valid, I have compared the AP college football rankings dating back to 2005 to Football Outsiders' F/+ stat for each season's top 25 teams. F/+ is a combination of the Fremeau Efficiency Index and the S&P+ Ratings. Combined, these stats account for just about everything in college football on a play-by-play level, including play success rate, EqPts per play, drive efficiency, and opponent adjustments. It is probably fair to assume that F/+ is the best quantitative measure of team skill that exists at this moment.

Below, I have fit a second-degree polynomial curve with heteroskedasticity-robust standard errors to the data to approximate the average AP ranking for each F/+ rank. Which team has the best F/+ rating since 2005? The 2011 Alabama Crimson Tide, which massacred LSU in the National Championship game, had a rating of 53.9%.
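For readers who want to try this kind of fit themselves, here is a minimal sketch in Python. It is not the code behind the graph: the data are synthetic, the function name `fit_quadratic_hc0` is made up for illustration, and it assumes the HC0 (White) flavor of robust standard errors, since the exact variant used is not stated.

```python
import numpy as np

def fit_quadratic_hc0(x, y):
    """OLS fit of y = b0 + b1*x + b2*x^2 with HC0 (White) robust standard errors.

    HC0 is an assumption; other robust variants (HC1, HC3) rescale the same formula.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    X = np.column_stack([np.ones_like(x), x, x**2])   # intercept, linear, quadratic
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)      # ordinary least squares
    resid = y - X @ beta
    bread = np.linalg.inv(X.T @ X)
    meat = X.T @ (X * resid[:, None] ** 2)            # X' diag(e^2) X
    cov = bread @ meat @ bread                        # sandwich estimator
    return beta, np.sqrt(np.diag(cov))

# Synthetic stand-in data (NOT the real AP or F/+ numbers):
# noise grows with rank to mimic heteroskedasticity.
rng = np.random.default_rng(0)
fplus_rank = np.arange(1, 26, dtype=float)
noise = rng.normal(0.0, 0.5 + 0.1 * fplus_rank)
ap_rank = 2.0 + 0.6 * fplus_rank + 0.01 * fplus_rank**2 + noise

beta, robust_se = fit_quadratic_hc0(fplus_rank, ap_rank)
print("coefficients (b0, b1, b2):", np.round(beta, 3))
print("robust standard errors:   ", np.round(robust_se, 3))
```

The robust errors leave the fitted curve unchanged. They only widen or narrow the uncertainty around each coefficient when the scatter is not constant across ranks.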

With this graph in place, I decided to test whether conference bias exists in the AP rankings. To do this, I made dummy variables for each conference (including the Big East, may it RIP). All teams that weren't in a power conference were placed in the Not Power Conference category. Also, since many teams have shifted conferences over that timespan, I have simply placed each team in the conference in which it played that particular season. So, for example, Missouri counts as a Big 12 team until 2012, when it joined the SEC, and TCU doesn't count as a Big 12 team until 2012.

| Conference | AP Ranking Bias |
| --- | --- |
| SEC | -0.349 |
| PAC12 | 1.150 |
| BIG12 | 1.076 |
| BIG10 | 0.327 |
| ACC | 2.629 |
| Big East | -0.249 |
| Prestigious | -1.440 |

I dropped the non-Power Conference dummy to avoid perfect collinearity, leaving us with the above table that shows the effect of conference on AP ranking. It is very clear in this case that other conferences are discriminated against when compared to the SEC. Every single coefficient for conference dummies is more positive than SEC, which suggests that being in any conference besides the SEC will lead to a worse ranking in the AP Poll when controlling for F/+.
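To make the dummy-variable setup concrete, here is a rough Python sketch under stated assumptions. The team-seasons are randomly generated, the conference effects in `true_effect` are invented, and only a subset of conferences is included. It illustrates why one category has to be dropped and how the remaining coefficients read as differences from that baseline. It does not reproduce the table above.

```python
import numpy as np

CONFERENCES = ["Non-Power", "SEC", "PAC12", "ACC"]   # hypothetical subset
BASELINE = "Non-Power"                               # the dropped category

def dummy_matrix(labels, categories):
    """One 0/1 column per category, in the order given."""
    labels = np.asarray(labels)
    return np.column_stack([(labels == c).astype(float) for c in categories])

# Synthetic team-seasons (NOT real data); effects below are made up.
rng = np.random.default_rng(1)
n = 200
fplus_rank = rng.integers(1, 26, size=n).astype(float)
conf = rng.choice(CONFERENCES, size=n)
true_effect = {"Non-Power": 0.0, "SEC": -0.5, "PAC12": 1.0, "ACC": 2.5}
ap_rank = (1.0 + 0.9 * fplus_rank + 0.002 * fplus_rank**2
           + np.array([true_effect[c] for c in conf])
           + rng.normal(0.0, 0.5, size=n))

base = np.column_stack([np.ones(n), fplus_rank, fplus_rank**2])
kept = [c for c in CONFERENCES if c != BASELINE]

# With an intercept AND every dummy, the dummy columns sum to the intercept
# column, so the design matrix loses one rank (perfect collinearity).
X_full = np.hstack([base, dummy_matrix(conf, CONFERENCES)])
print("all dummies: rank", np.linalg.matrix_rank(X_full), "of", X_full.shape[1], "columns")

# Dropping the baseline dummy restores full rank; each remaining coefficient
# is the shift in AP rank relative to a non-power team with the same F/+ rank.
X = np.hstack([base, dummy_matrix(conf, kept)])
beta, *_ = np.linalg.lstsq(X, ap_rank, rcond=None)
conf_effects = dict(zip(kept, beta[3:]))
for name, effect in conf_effects.items():
    print(f"{name}: {effect:+.2f} spots vs. {BASELINE}")
```

Any category can serve as the baseline. Choosing a different one shifts every coefficient by the same constant, so the gaps between conferences stay the same.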

These results show that a team from the PAC 12 is on average ranked approximately 1.15 spots worse than an equivalent team in a non-power conference, and 1.50 spots worse than the same team from the SEC. The former Big East conference also enjoyed a favorable bias compared to non-power conference teams (East Coast bias, anyone?), although not to the same extent as the SEC. The largest bias appears to work against teams from the ACC, which are ranked about 2.63 spots worse than expected; this coefficient is statistically significant at the 1% level.

In addition to conference bias, I have found support for a "program prestige bias" whereby the historically good programs Oklahoma, USC, Ohio State, Notre Dame, and Alabama are ranked better in the AP than the advanced metrics would recommend. The last line in the table shows that these teams are on average ranked 1.44 spots better in the AP Rankings than F/+ would predict. Also interesting to note: these results are very nearly statistically significant at the 10% level.

While these results are rather interesting, they aren't thoroughly scientific because of the lackluster p-values. To tighten the confidence intervals, I would need more data to work with. Unfortunately, Football Outsiders' F/+ data only goes back to 2005, and there doesn't appear to be week-by-week F/+ data. Since these are the final AP rankings that account for the inter-conference play of bowl games, I would expect the midseason AP Rankings to be even more biased.

What teams are hurt most by conference bias? Based on F/+ ratings and a polynomial of best fit, we can predict where a team should be ranked according to F/+ and look at the difference between that predicted figure and the actual AP Ranking of teams with multiple seasons in the Top 25.
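As a hedged illustration of that calculation, the sketch below fits a quadratic, predicts each team-season's AP rank from its F/+ rank, and averages predicted minus actual by school. The records are toy values, the function name `bias_per_season` is hypothetical, and the sign convention (negative means ranked worse than F/+ predicts) is my reading of the description above, not a confirmed detail.

```python
import numpy as np
from collections import defaultdict

def bias_per_season(records, min_seasons=2):
    """Average (predicted AP rank - actual AP rank) per school.

    records: iterable of (school, fplus_rank, ap_rank) team-seasons.
    Schools with fewer than `min_seasons` appearances are left out.
    Sign convention is an assumption: negative = ranked worse than predicted.
    """
    records = list(records)
    x = np.array([r[1] for r in records], dtype=float)
    y = np.array([r[2] for r in records], dtype=float)
    coeffs = np.polyfit(x, y, 2)          # second-degree polynomial of best fit
    predicted = np.polyval(coeffs, x)

    diffs = defaultdict(list)
    for (school, _, _), pred, actual in zip(records, predicted, y):
        diffs[school].append(pred - actual)
    return {s: float(np.mean(d)) for s, d in diffs.items() if len(d) >= min_seasons}

# Toy records (NOT real rankings): "Under" always sits 3 spots worse than its
# F/+ rank, "Over" 3 spots better, and "Solo" appears only once.
records = []
for f in (5, 10, 15, 20):
    records.append(("Under", f, f + 3))
    records.append(("Over", f, f - 3))
records.append(("Solo", 12, 12))

result = bias_per_season(records)
for school, bias in sorted(result.items(), key=lambda kv: kv[1]):
    print(f"{school}: {bias:+.3f}")
```

In this toy data the fitted curve runs through the midpoint of the two schools, so "Under" comes out at about -3 and "Over" at about +3, while "Solo" is dropped for having a single season.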

As I expected, my Sun Devils do experience ranking bias, although I surely didn't expect them to be the most biased against in college football!

| School | Bias Per Season |
| --- | --- |
| Arizona State | -5.669 |
| Nebraska | -5.579 |
| Tennessee | -4.095 |
| Florida State | -3.892 |
| Ole Miss | -3.452 |
| Miami | -2.957 |
| Texas Tech | -2.830 |
| Clemson | -2.824 |
| Oregon State | -2.771 |
| Texas A&M | -2.289 |
| Oklahoma State | -2.011 |
| Michigan | -1.816 |
| Iowa | -1.262 |
| Virginia Tech | -0.813 |
| BYU | -0.407 |
| Boston College | -0.365 |
| Cincinnati | -0.305 |
| Alabama | -0.263 |
| Vanderbilt | -0.108 |
| Louisville | -0.101 |
| Oklahoma | 0.046 |
| Wisconsin | 0.219 |
| Baylor | 0.223 |
| Missouri | 0.353 |
| Penn State | 0.412 |
| Boise State | 0.437 |
| California | 0.454 |
| Stanford | 0.485 |
| USC | 0.567 |
| Central Florida | 0.570 |
| Florida | 0.704 |
| UCLA | 0.996 |
| Kansas State | 1.066 |
| West Virginia | 1.376 |
| TCU | 1.380 |
| Oregon | 1.458 |
| Georgia | 1.545 |
| South Carolina | 1.759 |
| Georgia Tech | 1.865 |
| Michigan State | 1.900 |
| LSU | 1.987 |
| Notre Dame | 2.058 |
| Arkansas | 2.065 |
| Texas | 2.267 |
| Auburn | 2.591 |
| Ohio State | 3.369 |
| Utah | 5.144 |

This post originally ran at the Harvard Sports Analysis Collective. It is reprinted here with permission.

Austin Tymins is a sophomore at Harvard studying economics and statistics. He researches and writes primarily about collegiate and professional football. Follow him on Twitter @ATymins
